Let me be upfront about something. When I started digging into how universities handle student data, I genuinely expected to find a mixed bag. Some good, some bad, nothing too shocking. What I actually found was a picture far messier than most institutions would like to admit publicly.

The Data Challenge Universities Actually Face

Higher education has a data complexity problem that’s genuinely different in scale from most other sectors. On a single campus, you’ve got admissions offices, finance departments, health centers, libraries, research labs, and dozens of other units, all touching personal data in ways that rarely get coordinated. Then the General Data Protection Regulation arrived in 2018 and essentially said: sort it out, or pay for it.

Some universities sorted it out. Others are still finding their footing, six-plus years on.

That’s not a criticism. It’s a structural reality. A retailer knows roughly what data it holds and why. A university processes the personal information of students, staff, alumni, research participants, visiting scholars, applicants who never enrolled, donors, and contractors, often simultaneously, often under completely different legal bases. The scope is genuinely daunting.

Student records alone are an iceberg. Grades, health accommodations, financial aid history, disciplinary notes, mental health referrals. Each category carries its own sensitivity tier under the regulation, and special category data (health information, for instance) requires explicit protections that can’t be bolted on as an afterthought.

Research is where things get particularly complicated. Academic research can receive a degree of flexibility under GDPR, but that flexibility comes with strings attached: ethics board approval, data minimization obligations, retention schedules that actually get followed. A number of research departments are still operating on informal data management practices, and the gaps show.

What GDPR Actually Requires (Without the Legal Fog)

When people talk about GDPR compliance, they tend to either catastrophize it into a terrifying bureaucratic maze or wave it away as box-ticking. Neither framing is accurate.

At its core, the regulation asks institutions to answer some fairly practical questions. Do you know what personal data you hold? Where it lives? Who can access it? Under what legal basis are you processing it? And how long are you keeping it before you delete it?

That last one trips universities up constantly. Retention schedules exist on paper at most institutions. Whether the systems actually enforce deletion is a different conversation entirely.
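One way to picture the gap between a schedule on paper and a schedule that is enforced is a small job that compares each record against its retention period. The sketch below is illustrative only: the category names, the retention periods, and the `Record` shape are hypothetical, not drawn from any particular institution or student records system.

```python
from dataclasses import dataclass
from datetime import date, timedelta

# Hypothetical retention periods, in days, per record category.
RETENTION_DAYS = {
    "applicant_not_enrolled": 365,
    "disciplinary_note": 6 * 365,
}


@dataclass
class Record:
    record_id: str
    category: str
    last_activity: date


def overdue_for_deletion(records, today):
    """Return records whose retention period has elapsed as of `today`."""
    overdue = []
    for record in records:
        days = RETENTION_DAYS.get(record.category)
        if days is None:
            # No schedule on file for this category: skipped here.
            # A real job would likely log it for review.
            continue
        if record.last_activity + timedelta(days=days) < today:
            overdue.append(record)
    return overdue


records = [
    Record("r1", "applicant_not_enrolled", date(2022, 9, 1)),
    Record("r2", "applicant_not_enrolled", date(2025, 9, 1)),
    Record("r3", "disciplinary_note", date(2023, 1, 15)),
]
flagged = overdue_for_deletion(records, today=date(2026, 1, 1))
print([r.record_id for r in flagged])
```

A check like this only reports what is overdue. Whether deletion then happens in every system holding a copy is the part that institutions tend to find hard.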

Then there’s the rights side of things. Individuals, including students and staff, have the right to access their data, correct inaccuracies, request deletion under certain circumstances, and object to specific types of processing. Universities need workable processes for handling these requests, not a generic email address that someone checks when they get around to it.

Lawful basis matters too. There’s a tendency in higher education to lean on legitimate interests as a catch-all justification for data processing, but it isn’t one: relying on it means weighing the institution’s interest against the individual’s rights and recording the outcome. Processing needs a clearly identified basis, documented before the processing starts, not reverse-engineered after a complaint arrives.

The Consent Problem on Campus

Consent under GDPR must be freely given, specific, informed, and unambiguous. That last word does a lot of work.

The challenge with universities and consent is structural. When a student applies for a place, registers for courses, or uses campus health services, there’s an inherent power imbalance at play. Can a student genuinely refuse to consent to data processing when saying no might affect their access to university services? GDPR is fairly clear that where a meaningful power imbalance exists, consent isn’t a reliable legal basis. Yet plenty of institutions still rely on it for processing activities where a stronger basis would be more appropriate.

Alumni engagement sits in particularly awkward territory. Sending a fundraising appeal to a graduate decades later requires a legitimate legal basis.

“We’ve always done it” isn’t one. Neither is a pre-ticked opt-in buried in a registration form from years ago.

Third-Party Vendors and the Supply Chain Nobody Talks About

Universities run sprawling technology ecosystems. Student information systems, virtual learning environments, library databases, HR platforms, research repositories, catering apps. Behind each of those sits a vendor processing personal data on the institution’s behalf.

Under GDPR, controllers must have written agreements in place with the processors acting on their behalf. Those agreements need to specify what data is being processed, for what purpose, under what security standards, and what happens to the data when the contract ends.

In practice, many universities have signed vendor contracts whose data processing terms were never closely scrutinized. US-based edtech vendors in particular sometimes arrive with terms drafted for a pre-GDPR world, or for US legal frameworks that don’t map neatly onto European requirements. Negotiating those terms retroactively is tedious and uncomfortable. But it’s necessary.

The Schrems II decision added another layer of complexity. Transferring personal data outside the UK or EU requires additional safeguards: standard contractual clauses, transfer impact assessments, the full apparatus. International research collaborations and cloud services with US data centers both fall squarely into this territory.

Breach Notification: The 72-Hour Clock Nobody Loves

A personal data breach must be reported to the relevant supervisory authority within 72 hours of the institution becoming aware of it. Not three working days. Seventy-two hours, including weekends.
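The arithmetic is simple, which is part of why it catches people out. A minimal sketch, assuming the institution records a timezone-aware timestamp for the moment it became aware of the breach (the function and variable names are illustrative):

```python
from datetime import datetime, timedelta, timezone

NOTIFICATION_WINDOW = timedelta(hours=72)


def notification_deadline(became_aware_at):
    """Return the reporting deadline: 72 elapsed hours, weekends included."""
    if became_aware_at.tzinfo is None:
        raise ValueError("use a timezone-aware timestamp")
    return became_aware_at + NOTIFICATION_WINDOW


# Awareness on a Friday afternoon puts the deadline on Monday afternoon.
aware = datetime(2025, 3, 7, 16, 30, tzinfo=timezone.utc)
deadline = notification_deadline(aware)
print(deadline.isoformat())
```

Note that the calculation counts elapsed hours rather than working days, so a breach discovered late on a Friday leaves very little staffed time before the deadline.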

Universities experience breaches. Phishing attacks that compromise staff email accounts. Misconfigured databases. Laptops stolen from cars. The breach itself isn’t always the compliance failure. The failure is not having the detection and reporting processes in place so that when something goes wrong, the right people know quickly and the clock starts accurately.

Data protection officers at universities often describe the internal challenge as a political one. When a breach happens, the instinct from senior leadership is frequently to investigate quietly before involving regulators. That instinct is understandable. It’s also legally risky. The 72-hour requirement isn’t aspirational.

Where Universities Actually Stand

The honest answer is: it depends enormously on the institution.

Some large research universities have invested significantly in data protection infrastructure. Dedicated DPOs with real authority, properly resourced information governance teams, institution-wide data audits, staff training that goes beyond an annual click-through module. These institutions are in a reasonably strong position.

Smaller institutions, often with less resource and less specialist expertise, sometimes find GDPR compliance still sitting largely with whoever got handed the responsibility without the budget or headcount to match it. That’s a resourcing problem as much as a knowledge one.

The UK’s Information Commissioner’s Office has taken enforcement action in higher education. Fines have been issued. Reprimands published. The sector isn’t flying under the radar.

What Good Actually Looks Like

The institutions handling this well tend to share a few common traits. Data protection is genuinely embedded in how decisions get made, not consulted at the end of projects as a compliance sign-off. Their Records of Processing Activities document is actively maintained and regularly reviewed. Staff who handle sensitive data receive meaningful training rather than performative e-learning. And the DPO has a direct line to senior leadership, not a reporting line buried three layers down in legal or IT.

Perhaps most importantly, these institutions treat GDPR compliance as an ongoing discipline rather than a project with a finish line.

The data landscape keeps changing, the technology keeps changing, and the regulation, while stable in text, continues to be interpreted in new ways through enforcement decisions and case law.

Building systems robust enough to adapt to that reality is, ultimately, the whole game.

Structured content distribution is the decoupling of content from presentation through a headless CMS and Content as a Service (CaaS) architecture. It is a sound strategy for organizations managing complex content distribution networks across multiple channels.

To succeed, this digital transformation requires organizations to change both their publishing workflows and their content ownership structures. Governance complexity affects 41% of CaaS adopters, workflow mismatches impact a third, and training requirements average 14 to 18 weeks.

We have implemented these systems for clients in healthcare, financial services, and higher education, and the pattern is consistent: the three failures that kill structured content initiatives are the preview gap, the ownership vacuum, and the training deficit. Here is what we have learned about each one — and what actually works.

The Promise

The pitch for structured content distribution is compelling: create content once, store it as modular data in a headless CMS, deliver it via API to any channel (web, mobile, kiosks, AI agents) without reformatting. The CaaS market is projected to reach $2.8 billion by 2035, and over 65% of enterprises have adopted headless CMS architectures.

What the pitch leaves out is that integration challenges affect 46% of adopters using legacy CMS platforms, and that 31% of enterprises encounter deployment delays exceeding six months. The technology works, but governance requires just as much attention and is often overlooked. We have seen this avoidable pattern repeat across many structured content implementations.

Why Do Structured Content Migrations Stall?

In short, because organizations implement the technology without redesigning how their teams create, review, approve, and own content. That’s the governance problem.

A headless CMS decouples content from presentation. But most editorial teams have spent years, sometimes decades, working in systems where creating content and seeing how it looks are the same activity. WordPress, Drupal, and even SharePoint have a visual editing experience: build a page, see the page, publish the page.

Structured content does not work this way. Authors fill in fields like title, body, metadata, and related entries to publish content objects, not pages. As one analysis of Contentful’s editorial interface notes, “content editors work in structured content entry forms without seeing how content will render in production.” The front-end determines how those objects appear to users.
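To make the distinction concrete, here is a minimal sketch of a content object and two front-ends that render it differently. The `ContentEntry` fields mirror the ones named above. The renderer functions and the sample entry are hypothetical and do not represent any specific platform's API.

```python
from dataclasses import dataclass, field
from html import escape


@dataclass
class ContentEntry:
    """A content object: fields only, no layout or styling."""
    title: str
    body: str
    metadata: dict = field(default_factory=dict)
    related_entry_ids: list = field(default_factory=list)


def render_web(entry):
    """One possible web presentation of the entry."""
    return f"<article><h1>{escape(entry.title)}</h1><p>{escape(entry.body)}</p></article>"


def render_chat(entry):
    """A plain-text presentation, e.g. for a chatbot reply."""
    return f"{entry.title}: {entry.body}"


entry = ContentEntry(title="Library hours", body="Open 8am to 10pm on weekdays.")
print(render_web(entry))
print(render_chat(entry))
```

The author only ever edits `title` and `body`. Neither renderer is visible from the entry form, which is exactly the loss of visual context that editorial teams notice first.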

That architectural distinction is the correct one for consistent omnichannel delivery. It is also the one most likely to break editorial workflow expectations when teams do not deliberately plan for this big shift. In our experience, three governance failures account for the vast majority of structured content stalls.

What Is the Preview Gap, and Why Does It Derail Teams?

The preview gap is the loss of visual context that editorial teams experience when moving from a WYSIWYG (what you see is what you get) environment to a structured content interface, and it is the most immediate friction point in any headless CMS migration.

Authors who previously built pages visually are now filling in form fields and trusting that a front-end will render them correctly. The shift from “building a page” to “managing a content object” takes adjustment, and “once teams adapt, the structured approach tends to produce more consistent, reusable content.” The problem is what happens before they adapt.

What happens is that authors create workarounds. They paste formatted content into rich text fields, breaking the structured model. They submit tickets to developers asking “what will this look like?” multiple times per week. They maintain shadow documents in Google Docs so they can see their work in context. Every workaround is a governance failure — content that exists outside the system, formatting that undermines the content model, and developer time consumed by preview requests instead of feature development.

The planning that pays off includes building live preview environments for as many content sources as possible. This development work typically gets deprioritized because it is not user-facing, but it determines the success of the new system. As one migration guide puts it, headless platforms deliver excellent editorial experiences “when configured correctly — visual editing, live preview, flexible page-building, role-based permissions. But that configuration is work, it doesn’t happen by default.” Budget for it, build it first, and do not launch editorial access without it.

What Is the Ownership Vacuum?

The ownership vacuum is what happens when structured content crosses departmental boundaries without clear governance over who maintains the content model, who approves changes to shared components, and who is accountable when content is reused in a context the original author never intended.

In a traditional CMS, the marketing team owns the marketing pages, the product team owns product pages, etc. Structured content breaks this model deliberately — a product description created once might appear on the website, in a mobile app, in an email campaign, and through a chatbot simultaneously. But governance complexity affects 41% of CaaS adopters, and multi-team collaboration across 6 to 10 departments increases governance overhead by 27%.

Questions seldom asked include:

- When the compliance team changes a regulatory disclaimer, who is responsible for verifying that the change renders correctly across every channel consuming that content object?

- When marketing adds a field to the product content type, who assesses the downstream impact on the mobile app and the support knowledge base?

We have seen organizations discover these questions six months post-launch, usually during a content audit that reveals inconsistencies no one can trace. In regulated industries — healthcare, financial services, higher education — those inconsistencies are compliance risks.

The remedy is to establish a content model governance board before migration begins. A small, cross-functional group (typically 3 to 5 people spanning content strategy, development, and compliance) owns the content model as a shared organizational asset. They approve changes to content types, evaluate reuse implications, and maintain a living inventory of where shared content objects appear. This role does not exist in traditional CMS organizations because it is not needed there. In structured content environments, it is essential.
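A living inventory of where shared content objects appear can start as something very small. The sketch below assumes each consuming channel registers the objects it uses. The class, the object ID, and the channel names are hypothetical.

```python
from collections import defaultdict


class UsageInventory:
    """Tracks which channels consume each shared content object."""

    def __init__(self):
        self._channels_by_object = defaultdict(set)

    def register(self, object_id, channel):
        """Record that `channel` renders the object `object_id`."""
        self._channels_by_object[object_id].add(channel)

    def impacted_channels(self, object_id):
        """List every channel to re-verify when the object changes."""
        return sorted(self._channels_by_object.get(object_id, set()))


inventory = UsageInventory()
inventory.register("regulatory-disclaimer", "website")
inventory.register("regulatory-disclaimer", "mobile-app")
inventory.register("regulatory-disclaimer", "chatbot")
inventory.register("product-description", "website")

print(inventory.impacted_channels("regulatory-disclaimer"))
```

When compliance edits the disclaimer, the board has a concrete list of channels to check instead of a question nobody can answer.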

Why Does the Training Deficit Compound Everything?

Because organizations allocate 90% of their transformation budgets to technology and implementation, and only 10% to change management — the part that determines whether anyone actually uses the system they built.

Training requirements for CaaS implementations average 14 to 18 weeks, the elapsed time from initial exposure to genuine editorial fluency. This training creates the confidence for authors to create, structure, and publish content without reverting to old habits or filing developer tickets. Most implementation budgets account for a one-day training session and a knowledge base article. The gap between that and actual fluency is where adoption dies.

The compounding effect of the training deficit makes this particularly damaging. Undertrained authors hit the preview gap and panic. Without clear governance ownership, there is no one to answer their questions authoritatively. They build workarounds. Those workarounds corrupt the content model. The corrupted content model undermines the case for structured content. Stakeholders lose confidence. The transformation stalls.

BCG’s study of 850+ companies found that only 35% of digital transformations meet their value targets globally. That failure rate reflects a change management problem that is easily mistaken for a flaw in the technology itself.

To avoid this failure spiral, structure editorial onboarding as a phased engagement, not a one-and-done event. In our implementations, we start with a pilot group of 3 to 5 authors working with the system while the front-end is still being built. They surface friction points that the development team addresses in real time. By the time the broader editorial team is onboarded, the common pain points have been resolved, and the pilot group serves as advocates who can answer questions and support their peers. This approach adds little cost and dramatically improves adoption velocity.

What Should Organizations Do Before Starting a Structured Content Migration?

Treat governance design as a foundation to build a successful digital transformation:

- Audit your editorial workflows as they actually operate. Map who creates content, who reviews it, who approves it, and where informal workarounds exist. As one migration planning guide advises, most publishing workflows “are often based on legacy systems, informal approvals, or staff availability. The result? Delays, missed steps, and content that never quite gets finished.” Your structured content governance must account for the real workflow, not the theoretical one.

- Define content model ownership before selecting a platform. Determine who will own the content model as an organizational asset, who can request changes, and what the approval process looks like. This governance structure should be platform-agnostic — it is an organizational decision, not a technical one. We have helped clients build this through our roadmapping and strategy engagements, and it consistently reduces mid-project governance confusion.

- Budget for editorial experience parity. If your authors currently have WYSIWYG editing, live preview, and visual page building, do not assume they will accept a simpler and more limiting form-based interface. Calculate the development effort required to provide contextual preview in your new architecture and include it in the implementation scope, not as a phase-two enhancement. Phase two rarely arrives before editorial frustration does.

Wrap Up

The CaaS pitch is not wrong. Structured content distribution is the right architecture for organizations publishing across multiple channels, and it is increasingly the right architecture for AI readiness — structured data is what AI systems consume most effectively. But the promise underestimates the organizational effort to make it successful.

Technology is the easy part. Governance, training, and editorial adoption are harder, and that is where implementations succeed or fail.

We have built these systems on Contentful, Drupal, and composable architectures for organizations in regulated industries where getting content wrong has real consequences. The lesson we keep relearning is the same one: start with the team, not the platform.

Summary

On April 20, 2026, the Department of Justice extended ADA Title II web accessibility compliance deadlines by one to two years for state and local government entities. The extension does not pause underlying accessibility obligations, and it does not extend the separate HHS Section 504 deadline that may apply to hospitals and nonprofits receiving federal funding. Organizations tracking a single calendar are exposed to what we call the two-deadline trap. The right response is to use the extension to systematize accessibility, not to defer it.

This article is not legal advice. Confirm which rules apply to your organization with qualified counsel.

Four days before the original compliance date, the DOJ reset the clock.

Per a summary from Jackson Lewis, state and local governments with populations over 50,000 now have until April 26, 2027 to comply with WCAG 2.1 Level AA under Title II. Smaller entities and special districts have until April 26, 2028.
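As a rough illustration of how those two dates divide up, the lookup below encodes only what the summary above states. It is a sketch, not a legal determination: the function name is hypothetical, and how an entity with a population of exactly 50,000 is classified is an assumption that should be confirmed against the rule text.

```python
from datetime import date

LARGE_ENTITY_DEADLINE = date(2027, 4, 26)
SMALL_ENTITY_DEADLINE = date(2028, 4, 26)
POPULATION_THRESHOLD = 50_000


def title_ii_deadline(population, is_special_district=False):
    """Return the extended Title II compliance date for an entity.

    Assumption: exactly 50,000 is treated as a larger entity here.
    The article says "over 50,000", so confirm the boundary with counsel.
    """
    if is_special_district or population < POPULATION_THRESHOLD:
        return SMALL_ENTITY_DEADLINE
    return LARGE_ENTITY_DEADLINE


print(title_ii_deadline(320_000))
print(title_ii_deadline(12_000))
print(title_ii_deadline(320_000, is_special_district=True))
```

This covers Title II only. It says nothing about Section 504 or Title III, which may carry different dates for the same organization.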

If you run digital for a public entity, exhale. If the extension made you slow down, recalibrate. The deadlines moved, but the risk did not.

What actually changed, and what did not?

The DOJ pushed back the date that specific technical requirements become enforceable.

What did not change: the underlying ADA obligation to provide accessible programs and services. Title III public-accommodation risk for hospitals, providers, and nonprofits is unaffected. Demand letters and accessibility-related litigation continued straight through the extension announcement; they did not pause for it.

The compliance date is a deadline, not a start date. Organizations that wait will spend the extension period accumulating debt in templates, content, and vendor contracts, then attempt to remediate it in a sprint. That sprint is where the avoidable risk lives.

Why doesn’t the extension help hospitals and nonprofits?

The DOJ rule covers state and local government entities. It does not cover hospitals and nonprofits whose accessibility obligations come from a different source: federal financial assistance under Section 504 of the Rehabilitation Act.

Jackson Lewis notes that HHS has a separate Section 504 web accessibility compliance date, and as of this writing it has not been extended. Until HHS acts, plan as if it holds.

If your organization receives HHS funding, operates patient portals, runs scheduling or billing flows, accepts donations online, or hosts learning and event platforms, your timeline is likely shorter than the DOJ headline suggests. Title III public-accommodation exposure runs alongside it.

What is the two-deadline trap?

The two-deadline trap is the assumption that a single, well-publicized accessibility deadline is the only one that applies to your organization.

It happens when leadership tracks the DOJ Title II extension and treats it as the program’s primary clock, while a separate Section 504 or Title III obligation governs the actual exposure. The result is a roadmap pegged to the wrong date and a remediation budget that arrives late.

Avoiding it requires confirming, in writing and with counsel, which rules apply, which deadlines govern, and which user-facing services fall inside each scope.

What does day-one compliance actually look like?

Day-one compliance is the day your organization can demonstrate that new content is published accessibly, high-impact user flows work with assistive technology, vendors are managed as part of your posture, and governance is in place.

In our experience working with regulated organizations, the failure mode is rarely the homepage. It is the publishing system that keeps creating new accessibility debt — new pages, new PDFs, new embedded forms, new third-party widgets — faster than remediation can clear it. A defensible program stops the inflow before it works down the backlog.

That means accessibility moves upstream into design system components, CMS templates, content briefs, QA gates, and vendor intake. “Archived content” stops being a folder name and becomes a governance decision with rules. Procurement language changes so the next contract renewal does not lock in another year of vendor risk.

Will an accessibility overlay protect you?

No. Overlays can adjust some visual and interaction settings for some users, but they do not remediate the underlying barriers in your templates, components, content, or third-party tools. The Overlay Fact Sheet, signed by hundreds of accessibility practitioners and organizations, documents the consensus position.

If a widget is your strategy, assume you still need code-level fixes in templates, manual testing with assistive technology, content authoring training, and a third-party tool plan. The widget is not a substitute for any of those, and a number of overlay vendors have themselves been named in accessibility lawsuits.

What should accessibility leaders do this week?

Five actions, in order.

- Confirm which rules apply, and which deadline governs. Title II, Section 504, Title III, or more than one. If there is uncertainty, this is a counsel question, not an internal one.

- Name a single accessibility owner. Not a committee, but one person responsible for coordinating across IT, content, legal, and procurement. Accountability is the program.

- Test your top five user-critical flows manually. Forms, authentication, scheduling, payments, donations, patient portal: whatever blocks access to your primary services. Manual keyboard-only and screen reader spot checks find what automated scanners miss.

- Inventory third-party tools and audit their contracts. Where contracts are silent on accessibility, flag them as priority renewals. Your compliance posture runs through every embedded vendor whether the contract says so or not.

- Write a 90-day plan and share it with leadership. Specific, resourced, and tracked beats comprehensive and aspirational every time.

The extension is not a year off. It is a year to put a defensible program in place before the rules apply more explicitly than they already do. Use it wisely.

Bill Gates wrote “Content is King” back in 1996. He was right for about thirty years. On the open web, the winners were the ones who could produce, distribute, and monetize content at scale. That era shaped how we built digital products, how we organized marketing teams, and how we thought about content platforms.

That era is getting a new chapter.

When content becomes context

In the age of agents, content is context. It’s the raw material an AI uses to answer a customer’s question, draft a proposal, summarize a policy, or make a decision on behalf of your business.

If your context is a mess, your agent is a mess. Garbage in, confident-sounding garbage out.

For organizations in healthcare, higher education, and associations (industries where we work every day), governing that context isn’t a nice-to-have. A health system deploying an agent to answer patient questions needs to know which clinical protocol is current, who approved it, and what the agent is and isn’t allowed to cite. An association managing member benefits can’t afford an agent that surfaces a two-year-old policy document as current guidance.

And it’s not just the regulated organizations themselves. The enterprise technology companies that serve these industries (the SaaS platforms, the data providers, the system integrators) face the same challenge: if the content powering their products isn’t structured and governed, the agents built on top of it will inherit every gap. The stakes in regulated industries make the content-as-context problem concrete and urgent, but the same dynamics show up everywhere brand, voice, and accuracy matter: retail pricing, financial disclosures, B2B product specifications, public sector policy. Different risk profiles, same fundamental problem.

This isn’t theoretical. Gartner predicts that 40% of enterprise applications will include task-specific AI agents by the end of 2026, up from less than 5% in 2025. The shift is already moving from prediction to product.

The platforms we work with every day show the movement clearly. The Drupal AI Initiative launched last June and hit $1 million in funding within five months, with the Drupal AI and AI Agents modules reaching production-ready status in October 2025. Acquia built on that foundation with Acquia Source, shipping three AI agents for its Drupal-powered SaaS CMS in December. Contentful open-sourced its MCP server and has been publishing active guidance on agentic content operations. These aren’t experiments. They’re shipping.

Across the category, the pattern is broad. Contentstack launched Agent OS in September 2025 and introduced what it calls the “Context Economy” as its positioning. Kontent.ai shipped what it calls an Agentic CMS the following month. The Model Context Protocol that Anthropic introduced in late 2024 has become the connective tissue, adopted by OpenAI, Google DeepMind, and most of the CMS world.

The platforms are ready. The question is whether your content is.

What agents actually need

An agent doesn’t want a rendered web page. It wants structured, canonical, permissioned, versioned truth. That means:

- Structure so the agent can reason over content rather than scrape through marketing copy

- Versioning so it knows which policy, price, or product spec is current

- Permissions so the agent answering a customer question can’t pull from an internal-only HR doc

- Freshness signals so stale content doesn’t get treated as authoritative

- Governance so legal, brand, and compliance can trust what the agent says on their behalf

That’s the same job a mature content platform has been doing for years, just pointed at a new kind of consumer.
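The list above can be read as a set of filters applied before anything reaches an agent. The following is a minimal sketch under stated assumptions: the `ContentItem` fields, the two audience labels, and the 365-day freshness window are all hypothetical choices made for illustration.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class ContentItem:
    item_id: str
    is_current: bool    # versioning: is this the live version?
    audience: str       # permissions: "public" or "internal"
    approved: bool      # governance: signed off by an owner?
    reviewed_on: date   # freshness: date of the last review


def agent_context(items, agent_audience, today, max_age_days=365):
    """Return only the items an agent may treat as authoritative."""
    allowed = []
    for item in items:
        if not item.is_current or not item.approved:
            continue
        if item.audience == "internal" and agent_audience != "internal":
            continue
        if (today - item.reviewed_on).days > max_age_days:
            continue
        allowed.append(item)
    return allowed


items = [
    ContentItem("refund-policy-v1", False, "public", True, date(2023, 5, 1)),
    ContentItem("refund-policy-v2", True, "public", True, date(2025, 11, 3)),
    ContentItem("hr-handbook", True, "internal", True, date(2025, 10, 1)),
    ContentItem("pricing-2021", True, "public", True, date(2021, 2, 9)),
]
usable = agent_context(items, agent_audience="public", today=date(2026, 1, 15))
print([item.item_id for item in usable])
```

Every field the filter reads is metadata that a governed content platform already maintains. Content without those fields cannot be filtered this way at all.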

We’ve seen this movie before

Every channel shift exposes whether your content was ever really structured to begin with. CD-ROM, then the web, then mobile, now agents. Each one forces organizations to untangle content from presentation. Headless CMS platforms like Drupal, Contentful, Sanity, and Strapi won that argument. Content as structured data, delivered via API, rendered wherever you need it.

Agents are the most demanding channel yet. They don’t just display your content. They consume it, reason over it, and then take action. If your content is trapped inside HTML blobs or buried in PDFs that no one’s touched since 2021, it’s not ready to be context. Structure is the whole game now.

Where context lives today

Right now, company context is scattered across:

- Websites and headless CMS platforms

- GitHub repos full of markdown

- Confluence, Notion, SharePoint, Google Drive

- Salesforce, HubSpot, and a dozen other systems of record

- PDFs, Slack threads, and somebody’s laptop

Some of these are built for governance. Most aren’t. GitHub is hands-down great for technical content and version control, but marketing and legal teams aren’t opening pull requests to update a pricing page. Notion is excellent for collaboration, weak on structured content models and role-based delivery. Every organization I talk to has some version of this scatter, and it’s about to become a much bigger problem.

The rise of the Context Management System

The old acronym still works. CMS. New job.

Headless CMS platforms have quietly solved about 70% of what agents need. Structured content models. API-first delivery. Editorial workflows. Roles and permissions. Versioning. Audit trails. What they’re adding now is the connective tissue: MCP servers, vector indexing, semantic metadata, agent-optimized delivery endpoints. Acquia is embedding AI agents directly into Drupal-powered workflows through Acquia Source, and Contentful has open-sourced its MCP server to let agents take action on content operations. Across the rest of the category, Sanity launched its Content Agent in January 2026, and Storyblok, Brightspot, and dotCMS have released MCP servers of their own. That’s a much smaller leap than building the whole governance layer from scratch.

The “just throw it all in a vector database” approach has real merit as a retrieval layer. Retrieval is one job. Governance is a different one: who owns canonical truth, who approved the content, when it expires, and who’s allowed to see it. That’s always been the CMS job. It matters more now, not less.

For teams working on Drupal, Contentful, or Acquia Source, this is encouraging. The architectural decisions those platforms made years ago (structured data, granular revisioning, API-first design) turn out to be exactly what AI agents need. Your investment in content architecture is paying off in ways you didn’t plan for. Call it a head start.

What to do about it

If you’re building agentic products, or planning to, the content question is the quiet one that will bite you later. This is the work we’re spending most of our time on with clients right now. A few forward moves:

- Audit where your content actually lives and who owns it. You will be surprised.

- Pick a source of truth for each category of content. Don’t let five systems claim the same ground.

- Get your structured content models right. If your content is trapped inside HTML, it isn’t ready to be context.

- Build the governance layer before you need it. Versioning, permissions, approval workflows. Your legal team will thank you. So will your agent.

- Connect your CMS to your agents via MCP or equivalent. This is how context flows. Do it early.

Content was king when the battle was for attention. Context is king now that the battle is for correctness. Agents are only as good as the material you feed them, and that material has to be managed with the same rigor we’ve applied to code, to data, and yes, to content itself.

The organizations that treat content governance as infrastructure, not a cleanup project, will be the ones whose agents are trustworthy from day one. That window is shorter than it looks.

Summary

Most organizations are treating SEO and Generative Engine Optimization as two separate disciplines – and wasting resources in the process. The real strategic question is not which channel to optimize for but whether your content is built to be reused: extracted, synthesized, and cited by both search algorithms and AI answer engines. We call this Citation-Ready Content Architecture – a unified approach where structure, authority, and specificity make content perform across every discovery surface simultaneously. Organizations in regulated industries face compressed timelines: nearly half of healthcare searches already trigger AI Overviews.

Sixty percent of Google searches now end without a click. That number is not a forecast – it is a 2025 finding from Bain & Company. Meanwhile, Gartner predicts traditional search volume will drop 25% by the end of 2026 as users migrate to AI-powered answer engines. And here is the statistic that should change how you think about your content strategy: according to Ahrefs, 80% of URLs cited by ChatGPT, Perplexity, and Copilot do not rank in Google’s top 100 results for the original query.

That last data point is the one most SEO-vs.-GEO articles ignore. If the overlap between traditional rankings and AI citations were nearly complete, you could optimize for one and trust the other to follow. It is not. The two discovery channels draw from overlapping but meaningfully different content signals. Treating them as a single problem is the wrong framing, and so is treating them as two separate problems.

Why Is the “SEO vs. GEO” Framing Wrong?

Because it implies a choice between two competing strategies, when what actually matters is a single architectural principle applied across both.

SEO optimizes content for ranking position – getting your page onto a results list a human scans and clicks. GEO – Generative Engine Optimization, a term formalized by researchers at Princeton, Georgia Tech, and IIT Delhi in 2024 – optimizes content so AI systems can retrieve, synthesize, and cite it when generating answers. The Princeton study demonstrated that GEO techniques can boost content visibility in AI-generated responses by up to 40%, and that the most effective strategies vary by domain.

The difference is real. But the industry conversation has overcorrected, treating GEO as something exotic that requires a fundamentally new playbook. As Entrepreneur reported in April 2026, teams are making preventable mistakes by treating GEO “like an exotic new discipline” and shifting budget away from technical SEO into untested “AI visibility hacks.” Research from AirOps found that ChatGPT cited pages ranking number one in Google 3.5 times more often than pages outside the top 20.

Strong SEO remains the foundation. GEO is the structural extension that makes your existing authority legible to AI systems. They are not two strategies. They are one architecture.

What Makes Content “Citation-Ready” for Both Search and AI?

Citation-Ready Content Architecture is the practice of structuring content so it simultaneously ranks in traditional search results and gets extracted and cited by AI answer engines. It is not a new technology stack or a separate editorial workflow. It is a design principle: every piece of content your organization publishes should be built for reuse from the start.

Three characteristics define citation-ready content:

Modular structure. AI systems do not read your article top to bottom and decide whether to cite the whole thing. They extract passages – a definition, a statistic, a direct answer to a question. Content with clear headings, self-contained sections, and answer-first paragraphs gives both search engines and AI systems clean material to work with. The Princeton GEO study found that adding statistics to content improved AI visibility by 41%, and citing credible sources improved it by 115% for lower-ranked pages.

Demonstrated authority. Seer Interactive’s September 2025 study of 3,119 queries across 42 organizations found that brands cited in AI Overviews earned 35% more organic clicks and 91% more paid clicks than those not cited. Authority is no longer just a ranking signal – it is the qualification for being included in AI-generated answers at all. Author credentials, original research, linked sources, and topical depth are now dual-purpose investments.

Specificity over generality. AI systems select content that provides extractable facts – numbers, definitions, named frameworks, concrete comparisons. Content that gestures vaguely at a topic (“there are many factors to consider”) gets skipped in favor of content that states something specific and citable. We have written previously about how LLMs index and use content – the same accessibility and structural principles that help AI crawlers parse your pages also make your content more citation-worthy.

Why Are Healthcare and Higher Education Hit Hardest?

Because AI Overviews appear at disproportionately high rates for the query types these industries depend on – and the consequences of being absent or misrepresented are far more serious than lost traffic.

Conductor’s Q1 2026 analysis of 21.9 million searches found that healthcare queries trigger AI Overviews at a rate of 48.75% – nearly double the overall average of 25%. Technology queries trigger at roughly 30%. For healthcare organizations and universities, AI is already mediating nearly half the informational queries that drive patient acquisition and enrollment.

The real-world impact is already measurable. U.S. News reported in March 2026 that nearly 80% of people searching for degree information read Google’s AI Overviews, and many never click through to an institution’s website. The University of Maryland Global Campus responded by using AEO and GEO techniques to revise its degree pages and A/B test FAQ-style content. Johnson County Community College found that while AI-driven traffic represents less than 1% of its website visitors, engagement from that group is 59% above its site-wide average – suggesting AI-referred visitors arrive further along in their decision-making process.

For healthcare, the stakes go beyond enrollment. When AI engines synthesize clinical information, the accuracy of that synthesis depends on the quality and structure of the sources available. Organizations that have not optimized their content for AI citation are not just losing visibility – they are ceding authority over how their expertise gets represented to patients who increasingly trust AI-generated answers.

What Does the HubSpot Collapse Tell Us About This Shift?

That traffic built on loosely related content is structurally fragile in an AI-mediated search environment.

Multiple industry analyses documented an approximately 80% traffic drop across HubSpot’s blog properties as AI Overviews began answering the high-funnel informational queries that had driven HubSpot’s organic growth for over a decade. Pages about “famous sales quotes” and “cover letter examples” had driven enormous traffic but had minimal connection to HubSpot’s core CRM platform. When Google’s algorithm update prioritized content closely tied to a website’s core expertise, and AI Overviews began answering those generic queries directly, the traffic evaporated.

The lesson is not that content marketing failed. It is that content disconnected from your organization’s core authority is exactly the kind of content AI systems will summarize without ever sending a visitor your way. In our GEO optimization Q&A, we outline why organizations should start with their highest-authority content when optimizing for AI visibility rather than trying to cover every possible keyword.

For organizations in regulated industries – where your content is tightly tied to your institutional expertise by design – this is actually an advantage. A hospital publishing evidence-based patient education content is inherently closer to citation-ready than a SaaS company publishing tangentially related blog posts for traffic volume. The structural alignment is already there. What is often missing is the formatting and schema work that makes it extractable.

What Should Content Teams Do First?

Start with what you already have. The gap between SEO-optimized content and citation-ready content is usually structural, not substantive.

1. Audit your top 20 pages for extractability. Read the first paragraph of each section in isolation. Does it directly answer a question someone would ask an AI tool? If not, restructure it. AI systems and Google’s AI Overviews pull from the opening sentences of well-structured sections – bury your answer three paragraphs deep and it will not get cited.

2. Add the schema AI systems actually use. Implement FAQPage, Organization, Article, and author schema across your priority content. BrightEdge found that sites implementing structured data and FAQ blocks saw a 44% increase in AI search citations. Author schema is especially high-impact: websites with author schema are 3x more likely to appear in AI answers.

3. Track AI visibility alongside traditional rankings. Oomph’s GEO Analytics and Reporting service configures tracking in GA4 and Google Search Console to monitor AI bot traffic and AI-generated search impressions that standard analytics miss. At minimum, create referral segments for chat.openai.com, perplexity.ai, and other AI platforms, and watch for the signature pattern of rising impressions with declining clicks – the clearest signal that AI is summarizing your content without sending traffic.
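The referral segments in step 3 can be prototyped outside GA4 before you configure anything. The sketch below classifies exported referrer URLs by hostname. Only chat.openai.com and perplexity.ai come from the step above. The other hostnames are assumptions to check against your own referral reports, and `is_ai_referral` is a hypothetical helper, not part of any analytics product.

```python
from urllib.parse import urlparse

# Hostnames treated as AI answer engines. The first two come from the text;
# the rest are assumptions to verify against your own referral data.
AI_REFERRER_HOSTS = {
    "chat.openai.com",
    "perplexity.ai",
    "chatgpt.com",            # assumption
    "copilot.microsoft.com",  # assumption
}


def is_ai_referral(referrer_url: str) -> bool:
    """True when the referrer's host is an AI platform or a subdomain of one."""
    host = (urlparse(referrer_url).hostname or "").lower()
    return any(host == h or host.endswith("." + h) for h in AI_REFERRER_HOSTS)


referrers = [
    "https://chat.openai.com/",
    "https://www.perplexity.ai/search?q=nursing+degrees",
    "https://www.google.com/",
    "",  # direct traffic has no referrer
]
ai_hits = [r for r in referrers if is_ai_referral(r)]
print(f"{len(ai_hits)} of {len(referrers)} sessions came from AI platforms")
```

Matching on the hostname and its subdomains, rather than on a substring of the full URL, avoids counting a page on another site that merely mentions one of these domains in its path or query string.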

The organizations that will maintain visibility over the next two years are not the ones choosing between SEO and GEO. They are the ones building content that works across both discovery surfaces from the start – structured for extraction, grounded in genuine expertise, and specific enough that AI systems treat it as source material rather than background noise.

That is not a new content strategy. It is the old one, built to the standard the new environment actually requires.

Summary

Most content strategies optimize for one outcome: ranking. Ranking is only half the visibility equation now. Citation-Ready Content Architecture, developed at Oomph, helps organizations build content that performs across traditional search results and AI-generated answers simultaneously. It rests on three principles – modular structure, demonstrated authority, and extractable specificity – and we apply it with clients in healthcare, higher education, and government where being cited accurately is as important as being found.

This crystallized during a client conversation earlier this year. We were looking at their analytics – a major healthcare organization – and the pattern was striking. Impressions were climbing. Rankings were stable. But clicks were dropping steadily, month over month. The content was being surfaced by Google, but patients were getting their answers from AI Overviews without ever visiting the site.

That’s a visibility problem most of us weren’t trained to solve – and it requires a different content architecture.

Gartner predicts traditional search volume will drop 25% by the end of 2026 as users migrate to AI-powered answer engines. Ahrefs found that 80% of URLs cited by ChatGPT, Perplexity, and Copilot don’t rank in Google’s top 100 for the original query. And the Pew Research Center’s study of 68,879 actual Google searches found that only 8% of users clicked a traditional result when an AI Overview appeared, compared to 15% without one – roughly half the click-through rate.

Content that ranks and content that gets cited aren’t always the same – but they can be, if you build for both from the start. That’s Citation-Ready Content Architecture.

What Is Citation-Ready Content Architecture?

Citation-Ready Content Architecture is the practice of structuring digital content so it simultaneously ranks in traditional search engine results and gets extracted, synthesized, and cited by AI answer engines like ChatGPT, Google AI Overviews, and Perplexity. Developed by Oomph as a framework for regulated industries, it combines modular content structure, demonstrated authority signals, and extractable specificity into a unified content design principle – replacing the need to maintain separate SEO and GEO strategies.

The key word in that definition is “simultaneously.” That means content architecturally designed to work across every discovery surface – ranked results, AI summaries, voice assistants, whatever comes next – because the underlying structure supports all of them.

In our work with clients across healthcare, higher education, and government, we’ve found this transition isn’t a massive lift for organizations with strong content fundamentals. The gap between SEO-optimized and citation-ready content is structural, not substantive – it’s about how content is organized, not whether it’s good.

Why Do Organizations Need a New Content Architecture Now?

Information discovery has forked. Content built for only one path leaves visibility on the table.

Two parallel discovery systems now exist. Traditional search ranks your content in a list users scan. AI-powered answer engines synthesize information from multiple sources into a single response – often without the user ever clicking through to your site.

The research is unambiguous. The foundational Princeton GEO study demonstrated that content optimized for generative engines can boost visibility by up to 40% in AI responses. But it also showed that the most effective strategies vary by domain – what works for a law firm doesn’t necessarily work for a children’s hospital. A March 2026 study from researchers at the University of Tokyo found that structural optimization alone – independent of content changes – improved citation rates by 17.3% across six major generative engines.

The most striking finding: research from AirOps found that ChatGPT cited pages ranking number one in Google 3.5 times more often than pages outside the top 20. Strong SEO remains the foundation. Citation-ready architecture is what makes that foundation legible to AI systems too.

What Are the Three Principles of Citation-Ready Content?

The framework rests on three principles. Each serves both search engines and AI systems simultaneously – that dual purpose is the point.

Modular structure

AI systems don’t read your article start to finish and decide whether to cite the whole thing. They extract passages – a definition, a data point, a direct answer to a specific question. Content with clear headings, self-contained sections, and answer-first paragraphs gives both search algorithms and AI systems clean material to work with.

We’ve written about how LLMs index and use content – and the takeaway is that the same accessibility principles that help AI crawlers parse your pages also make your content more citation-worthy. Semantic HTML, logical heading hierarchies, and sections that can stand on their own aren’t new concepts. They’re just worth more now than they’ve ever been.

Demonstrated authority

Being cited by AI systems has become a meaningful competitive advantage. BrightEdge found that sites earning citations inside AI Overviews see CTR increases of up to 35% compared to traditional organic rankings alone. Websites with author schema are 3x more likely to appear in AI answers, and sites implementing structured data and FAQ blocks saw a 44% increase in AI search citations.

In practice, demonstrated authority means: Author credentials on every piece. Original data and research when you have it. Linked sources for every claim. Topical depth across related content – not one-off articles, but interconnected clusters that demonstrate sustained expertise.

Authority isn’t just a ranking signal – it’s the entry qualification for AI inclusion.

Extractable specificity

This is the one that separates citation-ready content from content that’s merely well-written. AI systems select content that provides extractable facts – numbers, definitions, named frameworks, concrete comparisons. Content that gestures at a topic (“there are many factors to consider”) gets skipped in favor of content that states something specific and citable.

The Princeton study found that adding statistics to content improved AI visibility by 41%, and citing credible sources improved visibility by 115% for lower-ranked pages. That 115% figure is significant: it means content that isn’t winning the traditional ranking game can still earn AI citations by being specific and well-sourced.

How Does This Apply Differently in Regulated Industries?

For regulated industries, the stakes are higher and the timeline compressed – but the structural fit is actually better.

Conductor’s Q1 2026 analysis of 21.9 million searches found that healthcare queries trigger AI Overviews at a rate of 48.75% – nearly double the overall average. For healthcare organizations and universities, AI is already mediating close to half the informational queries that drive patient acquisition and enrollment.

The structural advantage for regulated industries is real. Organizations in regulated industries – healthcare systems, universities, government agencies – produce content that’s inherently tied to their institutional expertise. A hospital publishing evidence-based patient education content is structurally closer to citation-ready than a SaaS company publishing tangentially related blog posts for keyword volume. The authority is real. The specificity is built in by the nature of the content. What’s typically missing is the formatting and schema work that makes it extractable.

When we optimize content for GEO, the biggest wins often come from restructuring content that already exists – not creating new content from scratch.

What Should You Do First to Make Your Content Citation-Ready?

Start with what you have. The gap is almost always structural, not substantive.

- Audit your top 20 pages for extractability. Read the first paragraph of each section in isolation. Does it directly answer a question someone would ask an AI tool? If it doesn’t, restructure it. AI systems pull from the opening sentences of well-structured sections. Bury your answer three paragraphs in and it won’t get cited.

- Implement the schema that AI systems actually use. FAQPage, Organization, Article, and author schema across your priority content. Author schema is especially high-impact – BrightEdge’s research shows it triples your likelihood of appearing in AI answers.

- Track AI visibility alongside traditional rankings. Oomph’s GEO Analytics and Reporting service configures tracking in GA4 and Google Search Console to monitor AI bot traffic and AI-generated search impressions. At minimum, watch for the pattern of rising impressions with declining clicks – that’s the clearest signal that AI is summarizing your content without sending visitors.

- Build for reuse from the start. Every new piece of content should include at least one standalone definition, one specific data point, and one direct answer to a question your audience would ask an AI tool. Make it easy for AI systems to cite you. That’s the architecture.
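For the schema step in the list above, here is a minimal sketch of generating JSON-LD for two of the named types. It uses the schema.org `FAQPage` and `Article` vocabularies and treats "author schema" as a `Person` nested under the article's `author` property, which is an assumption about what that phrase covers. The helper names and all sample values are placeholders.

```python
import json


def faq_page(questions):
    """Build schema.org FAQPage markup from (question, answer) pairs."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": q,
                "acceptedAnswer": {"@type": "Answer", "text": a},
            }
            for q, a in questions
        ],
    }


def article(headline, author_name, author_title, date_published):
    """Build schema.org Article markup with a Person author."""
    return {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "datePublished": date_published,
        "author": {"@type": "Person", "name": author_name, "jobTitle": author_title},
    }


def as_script_tag(markup):
    """Wrap markup in the script element used to embed JSON-LD in a page."""
    return '<script type="application/ld+json">\n' + json.dumps(markup, indent=2) + "\n</script>"


# Placeholder values for illustration only.
faq = faq_page([
    ("What is citation-ready content?",
     "Content structured to rank in search and be cited by AI answer engines."),
])
post = article("Example headline", "Jane Doe", "Director of Content", "2026-01-15")
print(as_script_tag(faq))
print(as_script_tag(post))
```

Generating the markup from the same fields that render the visible page keeps the two in sync, which matters because the structured data should describe content a visitor can actually see.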

In 20 years of building digital experiences, I’ve watched a handful of shifts fundamentally change how content needs to be structured. Mobile was one. Accessibility-first was another. The shift to AI-mediated discovery is the next.

Citation-Ready Content Architecture isn’t a bolt-on to your existing strategy – it’s the design principle that makes your existing strategy work across today’s fragmented discovery environment. Organizations that build for it now will compound that advantage as AI-mediated search grows. Those that wait will be optimizing for a world that has already moved on.

We’re helping clients across healthcare, higher education, and government make this shift. If your analytics show that pattern – impressions climbing, clicks dropping – start here.

As direct website traffic decreases and LLMs slurp up text from multiple sources to mix together and redistribute to users, it has never been more important to maintain high-quality online content. A ROT analysis — which stands for Redundant, Obsolete, Trivial — is a framework through which we can evaluate site content to improve it for usability, SEO, retrieval, and GEO.

This is a flexible exercise that can apply to a variety of digital properties: web pages, PDFs, intranets, social media pages, call center databases, support knowledge bases, and more. Anywhere that you, as an organization, are speaking to your audience, you have an opportunity to share knowledge, build trust, and solidify your brand image.

Conversely, ROTten content can mislead users, sow doubt, and damage your reputation.

When you use a ROT analysis to kickstart a content clean-up project, you’re ensuring that users and bots alike find only your latest, clearest, most accurate and relevant information. When done properly, it can even set up your team for better content production and management in the future.

How Oomph Approaches Content ROT Analyses

Every ROT analysis looks a little different depending on the industry, content, and what a particular audience needs.

Make a Plan

Before jumping into dashboards and spreadsheets, we start with a conversation. With any project, we need to understand what problems your organization needs to solve: What’s important to you and your users? Where are you struggling? This is our chance to understand the why behind your content.

As we learn more about what you need, we’ll define what ROT is for your organization. What existing policies do you have in place around archiving old or outdated content? If you don’t have policies, what makes sense for you? What key user journeys should the analysis focus on? We’ll answer these questions and more to make sure we’re going into the analysis with a clear vision of what your content should look like so we can see where it’s missing the mark.

Find the ROT

Let’s get into what ROT looks like specifically and where we look for it.

Redundant means the same information is communicated in more than one place. This can result in an inefficient information architecture and messy user paths. There are times duplicate content can be helpful, like when separate task flows require some of the same information. That’s why it’s important to know upfront what journeys are most important to prioritize. In these cases, when the same content shows up in multiple places across a website or app, it’s important to have a method for keeping all content in sync. If it’s possible to edit this content in a single place while distributing it across multiple pages, that can be a great method for maintaining a single source of truth.

Redundant might also refer to several articles written over time that deal with the same topics in similar ways. This can result in the newest content on the topic having its SEO/GEO cannibalized by older content on the same topic. Users might more easily find older content when you want them to find the latest.

Obsolete content includes outdated information, language, and (probably broken) links. This type of ROT is especially damaging when it’s related to products, services, or something users are trying to take action on. It’s important to keep in mind your entire digital landscape. Maybe you’ve updated the content on your main service page, but did you remember to update automated emails, support articles, and meta descriptions? What pages aren’t built directly into a user flow but can still be found by Google?

Consider whether it makes sense to archive or unpublish old content, like past news and events. And consider your audience: Is there a reason users would be looking for a historical record, and is that need strong enough to justify keeping it available? If you do choose to keep outdated information published, make sure that it’s clear to users that the content is old and consider providing a link to the latest version.

Trivial content can be harder to define and is highly subjective based on the organization. This might look like “fluff” pieces shared for the sake of SEO or maintaining a publishing schedule, or excessive marketing language that ultimately doesn’t serve you or your users. It might be low-traffic fine print details that apply to a specific audience who typically finds it another way. Maybe it’s content that is related to but outside of your core business function. You’ll need to make some decisions about what is important to you.

To find ROT, we’ll use a variety of collection and measurement tools. SortSite, Screaming Frog, and Siteimprove can locate broken links, orphaned pages, and other SEO issues. Google Analytics, Hotjar, Contentsquare, and MS Clarity can show common user flows and help identify trivial content. Data from these tools can also prioritize the analysis by surfacing what content is most important to users. If a page gets a lot of traffic, we know that it needs to be clear, up-to-date, and accurate. If a page isn’t visited much, we need to ask whether it should be more highly trafficked, consolidated with higher performing content, or removed.
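As a rough illustration of how crawl and analytics exports can be combined into a first pass, the sketch below flags ROT candidates for human review. Everything in it is an assumption made for the example: the `Page` fields, the two-year age threshold, and the pageview floor are placeholders, since what counts as ROT is defined per organization. It also only catches exact duplicates after normalizing whitespace and case. Near-duplicates need a similarity measure.

```python
import hashlib
from dataclasses import dataclass
from datetime import date


@dataclass
class Page:
    url: str
    text: str
    last_modified: date
    pageviews_90d: int


def rot_flags(pages, today, max_age_days=730, min_pageviews=10):
    """Map each URL to the set of ROT categories it is a candidate for."""
    flags = {p.url: set() for p in pages}

    # Redundant: identical body text after normalizing case and whitespace.
    by_digest = {}
    for p in pages:
        normalized = " ".join(p.text.lower().split())
        digest = hashlib.sha256(normalized.encode("utf-8")).hexdigest()
        by_digest.setdefault(digest, []).append(p.url)
    for urls in by_digest.values():
        if len(urls) > 1:
            for url in urls:
                flags[url].add("redundant")

    for p in pages:
        # Obsolete: not modified within the age threshold.
        if (today - p.last_modified).days > max_age_days:
            flags[p.url].add("obsolete")
        # Trivial: almost no traffic in the measurement window.
        if p.pageviews_90d < min_pageviews:
            flags[p.url].add("trivial")
    return flags


pages = [
    Page("/services", "Our services  include audits.", date(2025, 11, 1), 4200),
    Page("/what-we-do", "our services include audits.", date(2025, 3, 1), 35),
    Page("/news/2019-picnic", "Join us for the 2019 picnic!", date(2019, 6, 1), 2),
]
for url, found in rot_flags(pages, today=date(2026, 1, 15)).items():
    print(url, sorted(found))
```

The flags are prompts for a conversation, not verdicts. A low-traffic page may be fine print that a specific audience needs, and an old page may be a historical record worth keeping.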

Deliverables and Next Steps

After all this sorting and evaluating, you might be wondering what you’ll tangibly get out of the process. We know content teams are busy, and going through a review can feel like adding more work to the pile. How can we help prioritize meaningful progress here?

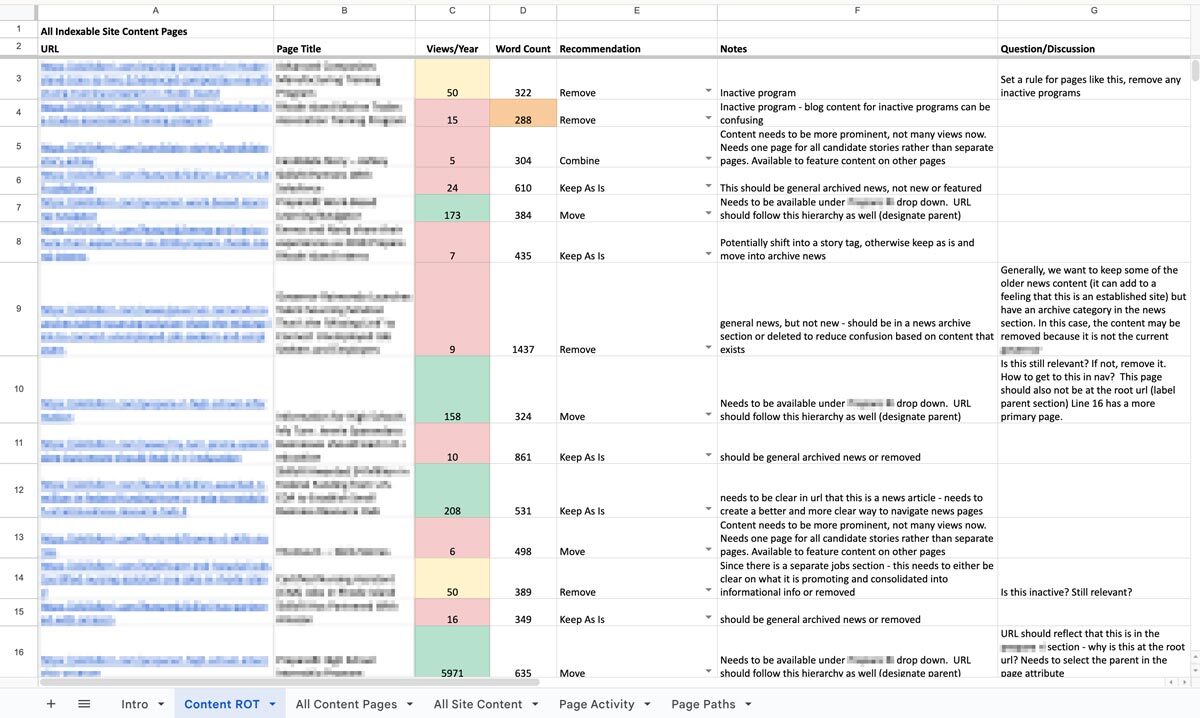

The big outcome is one of my personal favorites: a clean, annotated, actionable spreadsheet. Specifically, we’ll put together an audit of your content with links, page titles, notes on whether the content falls into any of the three ROT categories, and what to do about it: keep, modify, combine, or delete. Depending on the tools your content team uses or what you are willing to subscribe to, we might prepare dashboards and reports directly within an app that your team can use as an ongoing progress tracker. Wherever this list of to-dos lives, we’ll help you prioritize it so you can start ticking off the most crucial items. Depending on what we decided in early scoping agreements, we can even help work through some high-impact issues, like bulk deleting content, suggesting rewrites, and fixing broken links.
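For teams that want to see the shape of that spreadsheet, here is a small sketch that writes audit rows as CSV and rejects any action outside the four options. The column names and sample rows are assumptions for illustration, not a fixed deliverable format.

```python
import csv
import io

ACTIONS = {"keep", "modify", "combine", "delete"}
COLUMNS = ["url", "title", "rot_categories", "action", "notes"]


def write_audit(rows, out):
    """Write audit rows as CSV, rejecting any action outside the four options."""
    writer = csv.DictWriter(out, fieldnames=COLUMNS)
    writer.writeheader()
    for row in rows:
        if row["action"] not in ACTIONS:
            raise ValueError(f"unknown action {row['action']!r} for {row['url']}")
        # Store the category set as one readable cell.
        categories = "; ".join(sorted(row["rot_categories"]))
        writer.writerow({**row, "rot_categories": categories})


rows = [
    {"url": "/services", "title": "Services", "rot_categories": set(),
     "action": "keep", "notes": ""},
    {"url": "/what-we-do", "title": "What We Do", "rot_categories": {"redundant"},
     "action": "combine", "notes": "Merge into /services"},
    {"url": "/news/2019-picnic", "title": "2019 Picnic",
     "rot_categories": {"obsolete", "trivial"}, "action": "delete", "notes": ""},
]
buffer = io.StringIO()
write_audit(rows, buffer)
print(buffer.getvalue())
```

Restricting the action column to a fixed vocabulary is what lets the sheet be sorted and filtered into a prioritized to-do list later.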

We can also set up an ongoing content hygiene plan. While a dedicated content ROT analysis is a great way to identify and work through issues, an effective content plan should prevent ROT as much as possible and reduce the need for a large effort in the future. This might involve setting up policies, practices, and tools to guide future content management. We’ll help you find ways to see the bigger picture when updating or developing new content to make sure all pieces are accounted for. And when ROT falls through the cracks, you’ll have a plan to regularly review site content, setting ahead of time the when, what, and who.

One Piece in the Puzzle of Strong Content

As we continue to inspect the quality of your website and other digital properties, we can use this ROT analysis as a jumping-off point. The initial audit may lead directly into a deeper content audit to evaluate URL paths, heading usage, performance metrics, reading level, and more. As we consider reworking, combining, and cutting entire pages, we may find the need to restructure your information architecture and taxonomy structures, in part or in whole, informed by research exercises like card sorts and tree tests. Depending on what we’ve found in the existing content and how it needs to change, we might suggest changes to your content model, adding, modifying, or removing content types and the relationships between them.

A content ROT analysis is a flexible and fruitful way to take a fresh look at your content ecosystem. If you need help getting started, let us know. We’d love to dig in with you!

Compliance with the California Consumer Privacy Act (CCPA), as amended by the California Privacy Rights Act (CPRA), is a mandatory legal obligation for covered businesses, with significantly increased financial and operational risks starting in 2025.

The Critical Risk: Escalating Fines and Penalties

As of January 1, 2025, the California Privacy Protection Agency (CPPA) increased monetary thresholds and fines to align with the Consumer Price Index.

- Civil Penalties: Businesses face up to $2,663 per unintentional violation and up to $7,988 per intentional violation or violation involving minors.

- No Total Cap: Because each individual consumer affected by a breach or non-compliant practice can count as a separate violation, total fines for large-scale data incidents can quickly reach millions of dollars.

- Private Right of Action: Consumers can sue for statutory damages between $107 and $799 per incident (or actual damages) following a data breach involving unencrypted personal data.
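To see how quickly per-violation amounts add up, here is a back-of-the-envelope sketch using the figures above. It assumes one violation per affected consumer and a hypothetical incident of 10,000 consumers. Actual penalties depend on how regulators and courts count violations, so treat the results as ceilings for illustration, not predictions.

```python
# Amounts quoted above (effective January 1, 2025).
UNINTENTIONAL_PER_VIOLATION = 2_663
INTENTIONAL_PER_VIOLATION = 7_988
STATUTORY_DAMAGES_RANGE = (107, 799)  # per consumer, per incident


def civil_penalty_ceiling(affected_consumers, intentional=False):
    """Upper bound on civil penalties, counting one violation per consumer."""
    rate = INTENTIONAL_PER_VIOLATION if intentional else UNINTENTIONAL_PER_VIOLATION
    return affected_consumers * rate


def statutory_damages_range(affected_consumers):
    """Low and high statutory damages if every affected consumer claimed."""
    low, high = STATUTORY_DAMAGES_RANGE
    return affected_consumers * low, affected_consumers * high


consumers = 10_000  # hypothetical incident size
print(f"${civil_penalty_ceiling(consumers):,}")                    # $26,630,000
print(f"${civil_penalty_ceiling(consumers, intentional=True):,}")  # $79,880,000
print(statutory_damages_range(consumers))                          # (1070000, 7990000)
```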

Key Deadlines and New Requirements (2026–2028)

Regulators have moved from a passive to an active enforcement model, removing the mandatory “grace period” for fixing violations before penalties are applied.

- Mandatory Risk Assessments (Effective Jan 1, 2026): Businesses must conduct risk assessments for “significant risk” processing, such as selling/sharing personal data or using sensitive information.

- Automated Decisionmaking (ADMT): New requirements for technologies that replace human decision-making (e.g., for credit or employment) go into effect, with a compliance deadline of January 1, 2027.

- Mandatory Reporting: Organizations must begin reporting their risk assessment activities to the CPPA by April 1, 2028.

Does This Apply to My Business?

A for-profit business must comply if it does business in California and meets any of the following:

- Gross annual revenue exceeds $26.625 million (updated for 2025).

- Buys, sells, or shares the personal information of 100,000 or more California residents or households.

- Derives 50% or more of its annual revenue from selling or sharing personal data.
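The applicability test above is a gate (for-profit and doing business in California) followed by an any-of-three check, which can be sketched in a few lines. This is an illustration of the logic as stated here, not legal advice. `BusinessProfile` and its field names are hypothetical.

```python
from dataclasses import dataclass

REVENUE_THRESHOLD_USD = 26_625_000
CONSUMER_THRESHOLD = 100_000
DATA_REVENUE_SHARE_THRESHOLD = 0.50


@dataclass
class BusinessProfile:
    for_profit: bool
    does_business_in_california: bool
    gross_annual_revenue_usd: float
    ca_consumers_or_households: int  # whose personal information is bought, sold, or shared
    revenue_share_from_data: float   # fraction of annual revenue from selling or sharing personal data


def must_comply(b: BusinessProfile) -> bool:
    """Apply the gate, then check whether any one of the three thresholds is met."""
    if not (b.for_profit and b.does_business_in_california):
        return False
    return (
        b.gross_annual_revenue_usd > REVENUE_THRESHOLD_USD
        or b.ca_consumers_or_households >= CONSUMER_THRESHOLD
        or b.revenue_share_from_data >= DATA_REVENUE_SHARE_THRESHOLD
    )


# A small retailer below the revenue line still qualifies on consumer count.
retailer = BusinessProfile(True, True, 5_000_000, 120_000, 0.0)
nonprofit = BusinessProfile(False, True, 40_000_000, 500_000, 0.0)
print(must_comply(retailer), must_comply(nonprofit))  # True False
```

The retailer example is the case that tends to surprise people: revenue well under the threshold, but enough California consumers to be covered anyway.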

Operational Impact of Non-Compliance

Beyond fines, non-compliance can lead to court-ordered injunctions, mandatory regular audits, and the required deletion of valuable data assets. It also risks significant reputational damage and customer churn, as modern consumers increasingly prioritize data security when choosing where to spend.

Is your website ready for California’s evolving privacy standards? Non-compliance isn’t just a legal risk — it’s a business one that can result in millions in fines, mandatory audits, and lasting reputational damage. Our team helps organizations like yours navigate complex regulatory requirements with confidence, so you can focus on what matters most. Talk to our team today.

Selecting a content management system in healthcare is no longer a purely technical decision. In today’s environment, a CMS directly impacts compliance, accessibility, speed to publish, and ultimately, trust. Healthcare organizations are under growing pressure to deliver accurate, timely information across multiple digital channels, while meeting strict regulatory and accessibility requirements. The CMS at the center of that effort needs to support far more than page updates.

Why Healthcare CMS Decisions Are Uniquely Complex

Healthcare websites serve a wide range of audiences, from patients and caregivers to providers, partners, and regulators. Content must be clear, accurate, and easy to update—often by multiple teams—without introducing risk.

At the same time, healthcare organizations face constraints that many other industries don’t. Accessibility standards, privacy expectations, and governance requirements are non-negotiable.

A CMS that lacks flexibility or control quickly becomes a bottleneck.

“The healthcare content management system market is projected to grow to over $61 billion by 2031, underscoring how healthcare organizations are prioritizing modern, scalable digital platforms to support compliance, multi-channel delivery, and governance.”

According to Mordor Intelligence

What Healthcare Teams Should Prioritize

- A healthcare CMS must support strong governance without slowing teams down. Role-based permissions, approval workflows, and auditability are essential to ensure content accuracy and accountability.

- Accessibility also needs to be built into everyday publishing, not treated as an afterthought. The CMS should make it easy for teams to maintain WCAG-compliant content as sites evolve.

- Equally important is the ability to scale across channels. Healthcare content increasingly lives beyond the website—patient portals, mobile apps, email, and emerging digital touchpoints all require consistency. Managing this content from a single system reduces duplication and risk.

Flexibility Without Compromising Security

Healthcare organizations often rely on complex digital ecosystems, including EHRs, portals, analytics tools, and consent platforms. A modern CMS should integrate cleanly with these systems rather than trying to replace them.

Flexibility matters, but not at the expense of security. The right CMS supports modular integration while keeping sensitive data protected and clearly separated from content operations.

Planning For Change, Not Just Launch

CMS selection shouldn’t be based solely on current needs. Healthcare regulations, digital expectations, and technologies continue to evolve. The most effective platforms are designed to adapt without requiring frequent replatforming.

This means supporting incremental improvements, phased rollouts, and long-term scalability—so teams can modernize at a pace that aligns with organizational priorities.

The Role Of Modern, Composable CMS Platforms

Composable CMS platforms are gaining traction in healthcare because they treat content as structured data rather than static pages. This approach supports reuse, consistency, and omnichannel delivery while maintaining governance.

For healthcare teams, this translates into faster publishing, fewer bottlenecks, and greater confidence in content accuracy without sacrificing compliance.

What This Means For Healthcare Teams

Healthcare CMS selection is about more than choosing a tool. It’s about enabling teams to communicate clearly, operate efficiently, and adapt responsibly in a complex digital landscape.

Organizations that prioritize governance, accessibility, and flexibility position themselves to deliver trusted digital experiences today and in the years ahead.

Ready to Evaluate Your Healthcare CMS? Our team helps healthcare organizations navigate complex CMS decisions with a focus on governance, accessibility, and long-term scalability. Let’s talk about what the right platform looks like for your organization.

To avoid significant financial penalties, which rose on January 1, 2025, to as much as $7,988 per intentional violation, your website must function as a compliant interface for consumer privacy rights. Use this checklist to assess your current standing.

1. Mandatory Homepage Links

- “Do Not Sell or Share My Personal Information”: A clear and conspicuous link must be in the footer or header if you sell or share data for targeted advertising. This includes:

  - Retargeting Ads: Uploading your email list to Facebook (Meta), Google, or LinkedIn to show ads to those specific users or to find “Lookalike” audiences.

  - Data Brokerage: Selling your email list to another company or “renting” it out for their own marketing.

  - Third-Party Analytics: Sharing email-linked identifiers with ad networks that track users across multiple unrelated websites.

- “Limit the Use of My Sensitive Personal Information”: Required if you collect sensitive data (e.g., precise geolocation, health info, or race) for purposes beyond providing the core service.

- Alternative Option: You may use a single, combined link labeled “Your Privacy Choices” or “Your California Privacy Choices,” displayed together with the opt-out icon.

2. Automated Privacy Signals (Global Privacy Control)

- GPC Detection: Your website must automatically detect and honor “Global Privacy Control” (GPC) signals from user browsers (e.g., Brave, DuckDuckGo) as a valid opt-out request.

- Status Confirmation: As of January 1, 2026, you must display a clear confirmation to the user, such as a message stating “Opt-Out Request Honored,” when a GPC signal is detected.
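
Server-side GPC handling can be sketched as follows. Browsers that send the signal include the request header `Sec-GPC: 1`, as defined in the Global Privacy Control specification. The function names and the shape of the returned dictionary are assumptions for this example, and a real site would also need to stop the downstream selling or sharing itself, which this sketch does not cover.

```python
from typing import Mapping, Optional, Dict


def gpc_opt_out_requested(headers: Mapping[str, str]) -> bool:
    """Return True when the request carries a GPC opt-out signal.

    Header names are case-insensitive, so compare them in lowercase.
    """
    for name, value in headers.items():
        if name.lower() == "sec-gpc":
            return value.strip() == "1"
    return False


def privacy_response(headers: Mapping[str, str]) -> Dict[str, Optional[object]]:
    """Hypothetical helper: decide opt-out state and the notice to show the visitor."""
    if gpc_opt_out_requested(headers):
        # Treat the signal as a valid opt-out and confirm it to the user.
        return {"opted_out": True, "notice": "Opt-Out Request Honored"}
    # No signal: leave the visitor's existing preference untouched.
    return {"opted_out": False, "notice": None}
```

Keeping detection separate from the response makes it simple to reuse the same check in middleware, tag-manager logic, or audit scripts.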

3. Notice at Collection

- Timely Disclosure: You must provide a notice at or before the point of collection (e.g., on a sign-up form or via a cookie banner).

- Content Requirements: The notice must list categories of personal and sensitive info collected, the specific purpose for each, and how long each category will be retained.

4. Consumer Rights Intake (DSARs)

- Dual Methods: You must provide at least two designated methods for submitting requests (e.g., a web form and a toll-free number).

- Verification: Establish a process to verify a consumer’s identity without requiring them to create a new account solely for the request.

5. Technical & Policy Maintenance

- Accessibility: All notices must follow Web Content Accessibility Guidelines (WCAG) and be available in every language in which you conduct business.

- Annual Update: The online Privacy Policy must be reviewed and updated at least once every 12 months.

- No “Dark Patterns”: Ensure the user interface is symmetrical; for example, it should not be significantly harder to “Opt-Out” than it is to “Opt-In”.

Is your website one missing link or undetected signal away from a costly CCPA violation? Oomph’s team can walk you through a compliance audit, identify gaps in your current setup, and help you implement the technical and content updates needed to protect your organization. Get in touch with us today to book your CCPA compliance call.

Website accessibility has shifted from a “best practice” to a strictly codified legal requirement. Federal and state regulations have eliminated previous ambiguities, making WCAG 2.1 Level AA the mandatory technical standard for digital content. With updated deadlines now in place, organizations have a renewed window to get it right.

1. The Compliance Deadline: What’s Changed

The U.S. Department of Justice (DOJ) finalized a rule under Title II of the ADA that sets a firm compliance deadline for many entities:

- April 24, 2027: Deadline for public entities (and many private partners) serving populations of 50,000 or more to achieve full WCAG 2.1 Level AA conformance.

- April 26, 2028: Deadline for smaller entities.

- Private Sector Impact: While the DOJ rule focuses on public entities, it solidifies WCAG 2.1 AA as the de facto legal standard for private businesses in Title III lawsuits, which saw a 102% increase in recent years.

2. Why WCAG 2.1 Level AA?

Unlike older versions, WCAG 2.1 includes 17 additional success criteria aimed at mobile accessibility and at users with low vision or cognitive disabilities. Compliance is measured by the “POUR” Principles:

- Perceivable: Users must be able to see or hear content (e.g., alt text for images, captions for video).

- Operable: The site must work without a mouse (e.g., keyboard-only navigation, no keyboard traps).

- Understandable: Content must be predictable with clear error messaging on forms.

- Robust: Code must be “clean” enough to work with all current and future assistive technologies, like screen readers.
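
One narrow slice of the "Perceivable" principle, images without alt text, can be checked automatically. The sketch below uses only Python's standard-library `html.parser`; the class and function names are assumptions for this example. It flags `<img>` tags that have no `alt` attribute at all, and deliberately accepts an empty `alt=""`, which is the usual way to mark a decorative image. It says nothing about whether existing alt text is meaningful, so it complements a manual audit rather than replacing one.

```python
from html.parser import HTMLParser
from typing import List


class MissingAltFinder(HTMLParser):
    """Collect the src of every <img> that lacks an alt attribute."""

    def __init__(self) -> None:
        super().__init__()
        self.missing: List[str] = []

    def handle_starttag(self, tag, attrs):
        if tag != "img":
            return
        attr_map = dict(attrs)
        # alt="" is allowed (decorative image); only a missing attribute is flagged.
        if "alt" not in attr_map:
            self.missing.append(attr_map.get("src") or "(no src)")


def images_missing_alt(html: str) -> List[str]:
    finder = MissingAltFinder()
    finder.feed(html)
    finder.close()
    return finder.missing
```

For example, `images_missing_alt('<img src="a.png" alt="Logo"><img src="b.png">')` reports only `b.png`.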

3. Compliance Risks to Keep in Mind

- No “Grandfathering” for New Content: Any digital asset (PDFs, videos, or web pages) posted after the compliance deadline must be compliant from day one.

- Vendor Liability: Business owners are legally responsible for their website’s accessibility, even if they use third-party platforms or templates.

- Inadequacy of “Overlay” Widgets: The DOJ has clarified that automated widgets or “overlays” alone cannot guarantee ADA compliance; true accessibility requires fixing the underlying code.

- California-Specific Penalties: Under California’s Unruh Act, businesses can face statutory damages of $4,000+ per violation in addition to federal ADA settlements.

4. Future-Proofing: Looking Toward WCAG 3.0

While WCAG 2.1 Level AA is the current legal standard and WCAG 2.2 is the latest W3C recommendation, WCAG 3.0 is in development (expected no earlier than 2028). Early drafts propose moving from a pass/fail model to a Bronze, Silver, and Gold scoring system. Achieving WCAG 2.1 Level AA now would likely place an organization at roughly the “Bronze” level, providing a solid foundation for future shifts.

Is your website ready for the April 2027 deadline? Achieving WCAG 2.1 Level AA compliance requires more than a quick fix. It means addressing the underlying code, auditing every digital asset, and building accessibility into your process from the ground up. Whether you’re starting an audit, planning remediation, or building something new, get in touch with our team to start the conversation.

Contentful is no longer just an alternative CMS—it’s become a foundational platform for organizations navigating complexity, regulation, and rapid digital change. In 2026, the question isn’t what is Contentful? It’s why are so many organizations rebuilding their digital ecosystems around it? The answer lies in how digital experiences are built, managed, and scaled today.

Contentful Is Built for Systems, Not Pages

Traditional CMS platforms were designed around pages and templates. That model breaks down when content needs to move faster, live in more places, and remain consistent across teams and channels.

Contentful takes a different approach. It treats content as structured data, not static pages. That means teams create content once and deliver it anywhere—websites, apps, portals, email, or future channels that don’t yet exist.
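
To make "create once, deliver anywhere" concrete, here is a minimal sketch of the idea in plain Python. It does not use Contentful's API; the `Announcement` type, its fields, and the two render functions are hypothetical names chosen for illustration. The point is that the content lives as typed fields, and each channel decides how to present them.

```python
from dataclasses import dataclass
from html import escape


@dataclass(frozen=True)
class Announcement:
    """A structured content entry: fields, not markup."""
    title: str
    summary: str
    url: str


def render_web(item: Announcement) -> str:
    """Website channel: HTML, with field values escaped."""
    return (
        f'<article><h2><a href="{escape(item.url, quote=True)}">'
        f"{escape(item.title)}</a></h2>"
        f"<p>{escape(item.summary)}</p></article>"
    )


def render_email(item: Announcement) -> str:
    """Email channel: plain text built from the same fields."""
    return f"{item.title}\n{item.summary}\nRead more: {item.url}"
```

Because neither renderer stores its own copy of the text, a correction to the entry shows up in every channel the next time it is rendered.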

In 2026, this isn’t a “nice to have.” It’s how modern digital platforms operate.

Composable Architecture Is Now the Default

Composable architecture has moved from trend to standard. Organizations want the freedom to choose best-in-class tools without being locked into monolithic platforms.

Contentful fits cleanly into this model. It integrates with design systems, analytics platforms, personalization tools, consent managers, and AI services through APIs—without forcing teams into rigid workflows.

This flexibility allows organizations to evolve their stack over time instead of rebuilding every few years.

AI Depends on Structured Content

AI-driven experiences are only as good as the content behind them. In 2026, organizations are using AI to support personalization, search, localization, content optimization, and automation.

Contentful’s structured content model makes this possible. Clean, well-defined content enables AI tools to understand, reuse, and adapt content accurately—without introducing risk or inconsistency.

For teams exploring AI responsibly, Contentful provides the infrastructure needed to scale with confidence.

Governance and Compliance Are Built In, Not Bolted On

For regulated and mission-driven organizations, governance isn’t optional. Publishing controls, audit trails, permissions, and review workflows are essential.

Contentful supports these needs at scale. Teams can define roles, control who edits or publishes content, and maintain visibility into changes across environments. This level of governance is critical in industries like healthcare, legal, finance, and the public sector.
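
The governance pattern described here (roles, publish controls, and an audit trail) can be sketched generically. This is not Contentful's permission model or API; the role names, the `Entry` class, and the `perform` function are assumptions made for this example.

```python
from dataclasses import dataclass, field
from typing import List, Tuple

# Hypothetical role-to-permission mapping for this sketch.
ROLE_PERMISSIONS = {
    "author": {"edit"},
    "reviewer": {"edit", "approve"},
    "publisher": {"edit", "approve", "publish"},
}


@dataclass
class Entry:
    title: str
    status: str = "draft"  # draft -> approved -> published
    # Each record is (user, action, outcome); denied attempts are logged too.
    audit_log: List[Tuple[str, str, str]] = field(default_factory=list)


def perform(entry: Entry, user: str, role: str, action: str) -> bool:
    """Apply an action if the role allows it, and record the attempt either way."""
    allowed = action in ROLE_PERMISSIONS.get(role, set())
    # Publishing is only valid once the entry has been approved.
    if action == "publish" and entry.status != "approved":
        allowed = False
    entry.audit_log.append((user, action, "ok" if allowed else "denied"))
    if not allowed:
        return False
    if action == "approve":
        entry.status = "approved"
    elif action == "publish":
        entry.status = "published"
    return True
```

Logging denied attempts alongside successful ones is a design choice: in regulated settings, knowing who tried to publish unreviewed content can matter as much as knowing who succeeded.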

In 2026, compliance isn’t something teams add later—it’s designed into the platform from day one.

Marketing and Development Work Better Together

One of Contentful’s biggest advantages is how it aligns marketing and engineering teams. Developers maintain design systems and integrations. Content teams manage content without breaking layouts or workflows.

This separation of concerns reduces friction, speeds up delivery, and minimizes production errors—especially as digital ecosystems grow more complex.

Ready to explore what Contentful could do for your organization? Whether you’re evaluating platforms, planning a migration, or looking to optimize your current setup, Oomph can help you build a content infrastructure designed for the long term. Let’s talk about your next move.

Why Organizations Move to Contentful Now

Organizations typically migrate to Contentful when legacy systems start holding them back. Common triggers include:

- Slow publishing workflows

- Heavy developer dependency

- Difficulty scaling across channels

- Growing compliance requirements

- The need to support AI and personalization

In 2026, Contentful isn’t chosen because it’s new. It’s chosen because it’s resilient.

For organizations new to the platform, getting started doesn’t have to mean a complete rebuild. Oomph’s Contentful Kickstart Package helps teams move from decision to deployment with a structured, low-risk approach—giving you the foundation to scale as your needs evolve.

The Takeaway

Contentful has evolved alongside the modern digital landscape. It’s not just a CMS—it’s a content platform designed for scale, governance, and change.