Generative Engine Optimization (GEO) is making organizations scramble — our clients have been asking “Are we ready for the new ways LLMs crawl, index, and return content to users? Does our site support evolving GEO best practices? What can we do to boost results and citations?”

Large language models (LLMs) and the services that power AI summaries don’t “think” like humans, but they do perform similar actions. They seek content, split it into memorable chunks, and rank the chunks for trust and accuracy. If pages use semantic HTML, state facts and cite sources, and include structured metadata, AI crawlers and retrieval systems are more likely to find, store, and reproduce content accurately. That improves your chance of being cited correctly in AI overviews.

While GEO has disrupted the way people use search engines, the fundamentals of SEO and digital accessibility continue to be strong indicators of content performance in LLM search results. Making content understandable, usable, and memorable for humans also has benefits for LLMs and GEO.

How LLM systems (and AI-driven overviews) get their facts

Understanding how LLMs crawl, process, and retrieve web content helps us understand why semantic structure and accessibility best practices have a positive effect. When an AI system generates an answer that cites the web, several distinct back-end steps usually happen:

- Crawling — Bots visit URLs and download page content. Some crawlers execute JavaScript like a browser (Googlebot, for example), while others prefer raw HTML and limit their rendering.

- Chunking — Large documents are split into small, logical “chunks” of paragraphs, sections, or other units. These chunks are the pieces that are later retrieved for an answer. How a page’s content is structured with headings, paragraphs, and lists determines the likely chunk boundaries for storage.

- Vectorization — Each chunk is then converted into a numeric vector that captures its semantic meaning. These embeddings live in a vector database and enable systems to find chunks quickly. The quality of the vector depends on the clarity of the chunk’s text.

- Indexing — Systems store additional metadata (URL, title, headings, and other page metadata) to filter and rank results. Structured data like schema metadata is especially valuable.

- Retrieval — A user asks a question or performs a search, and the system retrieves the most semantically similar chunks via a vector search. It re-ranks those chunks using metadata and other signals, then composes its answer, sometimes citing its sources.
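To make the chunking, vectorization, and retrieval steps above more concrete, here is a minimal sketch in Python. It is a toy, not how any particular AI search product works: the "embedding" is a simple bag-of-words count rather than a learned vector, and the function names (`chunk_by_heading`, `vectorize`, `retrieve`) and sample page are made up for illustration.

```python
import math
import re
from collections import Counter


def chunk_by_heading(text):
    """Split plain text into chunks, starting a new chunk at each '# ' heading line."""
    chunks, current = [], []
    for line in text.strip().splitlines():
        if line.startswith("# ") and current:
            chunks.append(" ".join(current))
            current = []
        if line.strip():
            current.append(line.strip().lstrip("# "))
    if current:
        chunks.append(" ".join(current))
    return chunks


def vectorize(text):
    """Toy stand-in for an embedding: word counts (real systems use learned vectors)."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a, b):
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[term] * b[term] for term in a if term in b)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def retrieve(query, chunks, top_k=1):
    """Return the top_k chunks most similar to the query."""
    query_vector = vectorize(query)
    ranked = sorted(chunks, key=lambda c: cosine(query_vector, vectorize(c)), reverse=True)
    return ranked[:top_k]


# Hypothetical page content with two clearly headed sections.
PAGE = """
# Store hours
The store is open from 9am to 5pm on weekdays.
# Returns
Items can be returned within 30 days with a receipt.
"""

chunks = chunk_by_heading(PAGE)
best = retrieve("When can items be returned?", chunks)
print(best[0])
```

Because each section sits under its own heading and states its fact plainly, the question about returns lands on the "Returns" chunk. If both facts were buried in one long paragraph, the chunk boundaries and the match would be much fuzzier.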

The Case for Human-Accessible Content

There are many reasons why digital accessibility is simply the right thing to do. It turns out that, in addition to boosting SEO, accessibility best practices help LLMs crawl, chunk, store, and retrieve content more accurately.

During retrieval, small errors like missing text, ambiguous links, or poor heading order can keep the best chunks from surfacing. Let’s dive into how this can happen and which common accessibility pitfalls contribute to the confusion.

For Content Teams — Authors, Writers, Editors

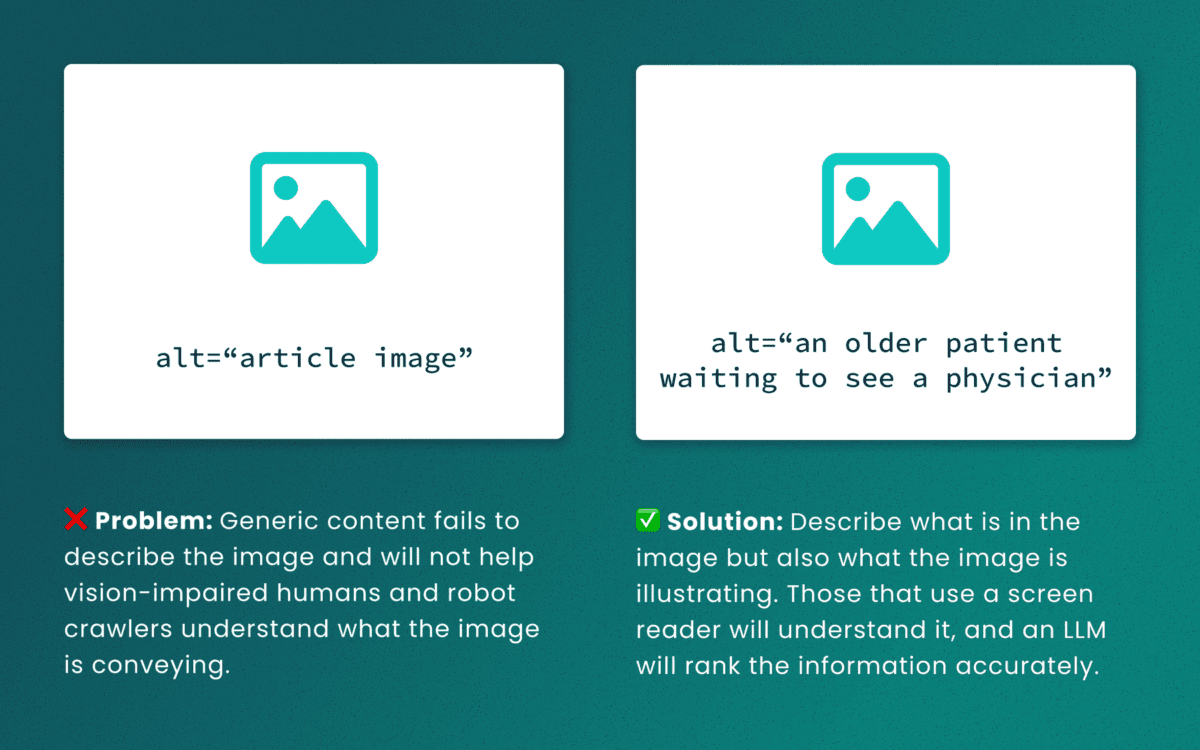

Lack of descriptive “alt” text

While some LLMs can employ machine-vision techniques to “see” images as a human would, descriptive alt text verifies what they are seeing and the context in which the image is relevant. The same best practices for describing images for people will help LLMs accurately understand the content.
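A quick audit can show where alt text is missing. The sketch below uses Python's standard `html.parser` module; the class name `AltTextAudit` and the sample markup are assumptions made for illustration, and a real audit tool would check far more than this.

```python
from html.parser import HTMLParser


class AltTextAudit(HTMLParser):
    """Collect images with no alt attribute, and images with an empty one."""

    def __init__(self):
        super().__init__()
        self.missing_alt = []  # no alt attribute at all: almost always a problem
        self.empty_alt = []    # alt="": valid for decorative images, worth a review

    def handle_starttag(self, tag, attrs):
        if tag != "img":
            return
        attr_map = dict(attrs)
        src = attr_map.get("src", "(no src)")
        if "alt" not in attr_map:
            self.missing_alt.append(src)
        elif not (attr_map["alt"] or "").strip():
            self.empty_alt.append(src)


# Hypothetical markup for illustration.
SAMPLE = """
<img src="team.jpg" alt="Five engineers reviewing a wireframe on a whiteboard">
<img src="chart.png">
<img src="divider.png" alt="">
"""

audit = AltTextAudit()
audit.feed(SAMPLE)
audit.close()
print("Missing alt:", audit.missing_alt)
print("Empty alt (confirm these are decorative):", audit.empty_alt)
```

An empty `alt=""` is the correct way to mark a purely decorative image, so the sketch reports those separately instead of treating them as errors.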

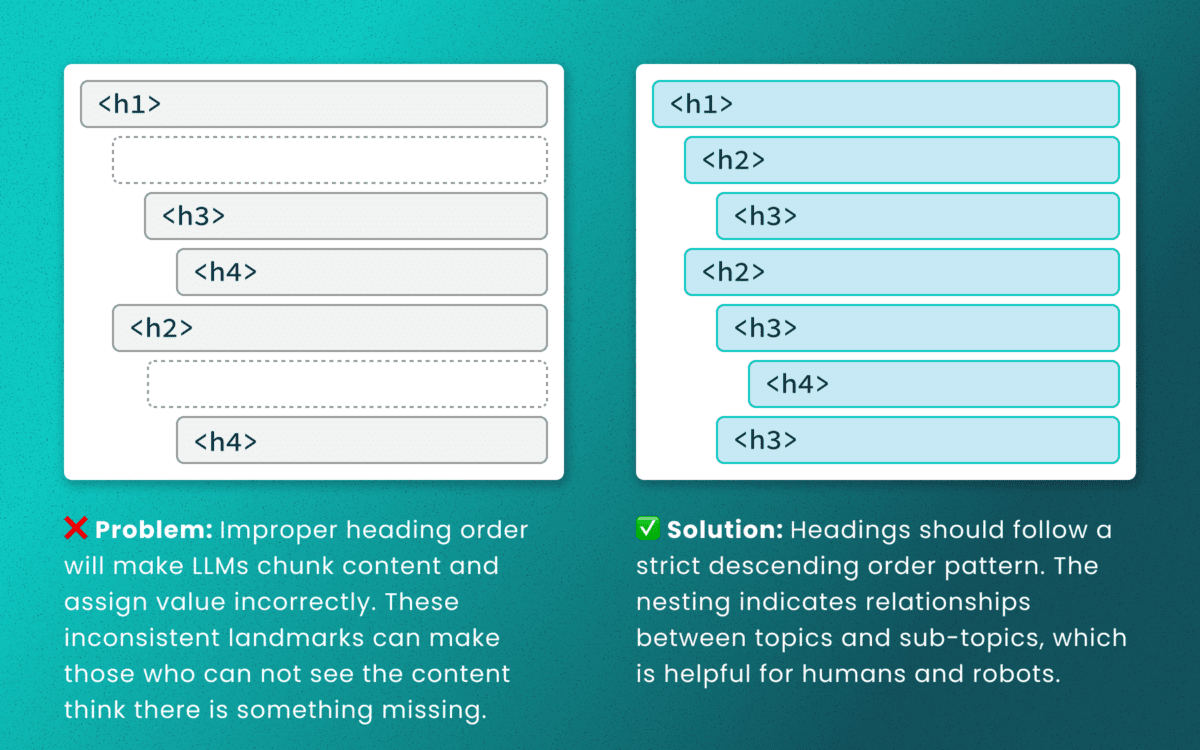

Out-of-order heading structures

Similar to semantic HTML, headings provide a clear outline of a page. Machines (and screen readers!) use heading structure to understand hierarchy and context. When a heading level skips from an <h2> to an <h4>, an LLM may fail to determine the proper relationship between content chunks. During retrieval, the model’s understanding is dictated by the flawed structure, not the content’s intrinsic importance. (Source: research thesis PDF, “Investigating Large Language Models ability to evaluate heading-related accessibility barriers”)
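Skipped heading levels are easy to detect automatically. Here is a small sketch, again using only Python's standard library; the helper name `find_skipped_levels` and the sample markup are hypothetical.

```python
import re
from html.parser import HTMLParser


class HeadingOutline(HTMLParser):
    """Record the level (1-6) of every heading in document order."""

    def __init__(self):
        super().__init__()
        self.levels = []

    def handle_starttag(self, tag, attrs):
        if re.fullmatch(r"h[1-6]", tag):
            self.levels.append(int(tag[1]))


def find_skipped_levels(html):
    """Return (previous, current) pairs where a heading jumps down more than one level."""
    parser = HeadingOutline()
    parser.feed(html)
    parser.close()
    skips = []
    for previous, current in zip(parser.levels, parser.levels[1:]):
        if current > previous + 1:
            skips.append((previous, current))
    return skips


# Hypothetical page outline: the <h4> follows an <h2> with no <h3> in between.
SAMPLE = "<h1>Guide</h1><h2>Setup</h2><h4>Details</h4><h2>Usage</h2>"

skipped = find_skipped_levels(SAMPLE)
print(skipped)
```

Moving back up the outline (from the `<h4>` to the next `<h2>`) is fine and is not flagged. Only jumps that skip a level on the way down are reported.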

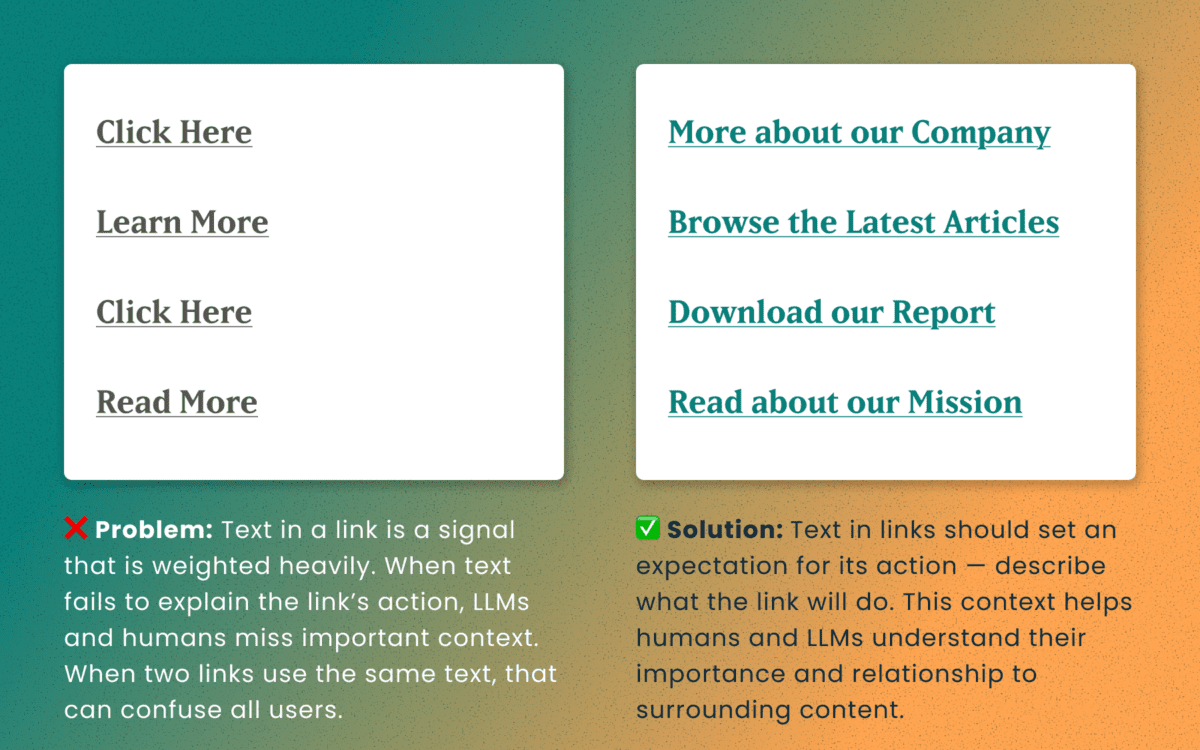

Descriptive and unique links

All of the accessibility barriers surrounding poor link practices also affect how LLMs evaluate the importance of links. Link text is a short textual signal that is vectorized to make proper retrieval possible. Vague link text like “Click here” or “Learn More” does not provide valuable signals. In fact, repeating the same “Learn More” text multiple times on a page can dilute the signals for the URLs those links point to.

Using the same link text for more than one destination URL creates a knowledge conflict. Like people, an LLM is subject to “anchoring bias,” which means it is likely to overweight the first link it processes and underweight or ignore the second, since they both have the same text signal.

Example of the duplicate link problem: <a href="[URL-A]">Duplicate Link Text</a>, and then later in the same article, <a href="[URL-B]">Duplicate Link Text</a>. Conversely, when the same URL is used more than once on a page, the same link text should be repeated exactly.
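The duplicate link problem can be checked with a short script. This is a sketch under simple assumptions: it compares link text case-insensitively, ignores links without an `href`, and the function name `conflicting_link_text` and sample paths are invented for illustration.

```python
from collections import defaultdict
from html.parser import HTMLParser


class LinkCollector(HTMLParser):
    """Collect (link text, href) pairs for every <a href="..."> on the page."""

    def __init__(self):
        super().__init__()
        self.links = []
        self._href = None
        self._text = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            self._href = dict(attrs).get("href")
            self._text = []

    def handle_data(self, data):
        if self._href is not None:
            self._text.append(data)

    def handle_endtag(self, tag):
        if tag == "a" and self._href is not None:
            text = " ".join("".join(self._text).split())  # normalize whitespace
            self.links.append((text, self._href))
            self._href = None


def conflicting_link_text(html):
    """Map each link text to its destinations when it points to more than one URL."""
    parser = LinkCollector()
    parser.feed(html)
    parser.close()
    targets = defaultdict(set)
    for text, href in parser.links:
        targets[text.lower()].add(href)
    return {text: sorted(hrefs) for text, hrefs in targets.items() if len(hrefs) > 1}


# Hypothetical markup: "Learn more" points to two different destinations.
SAMPLE = """
<a href="/pricing">Learn more</a>
<a href="/support">Learn more</a>
<a href="/pricing">See pricing plans</a>
"""

conflicts = conflicting_link_text(SAMPLE)
print(conflicts)
```

Any text that shows up in the result is a candidate for rewriting into something specific to its destination, such as "See pricing plans."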

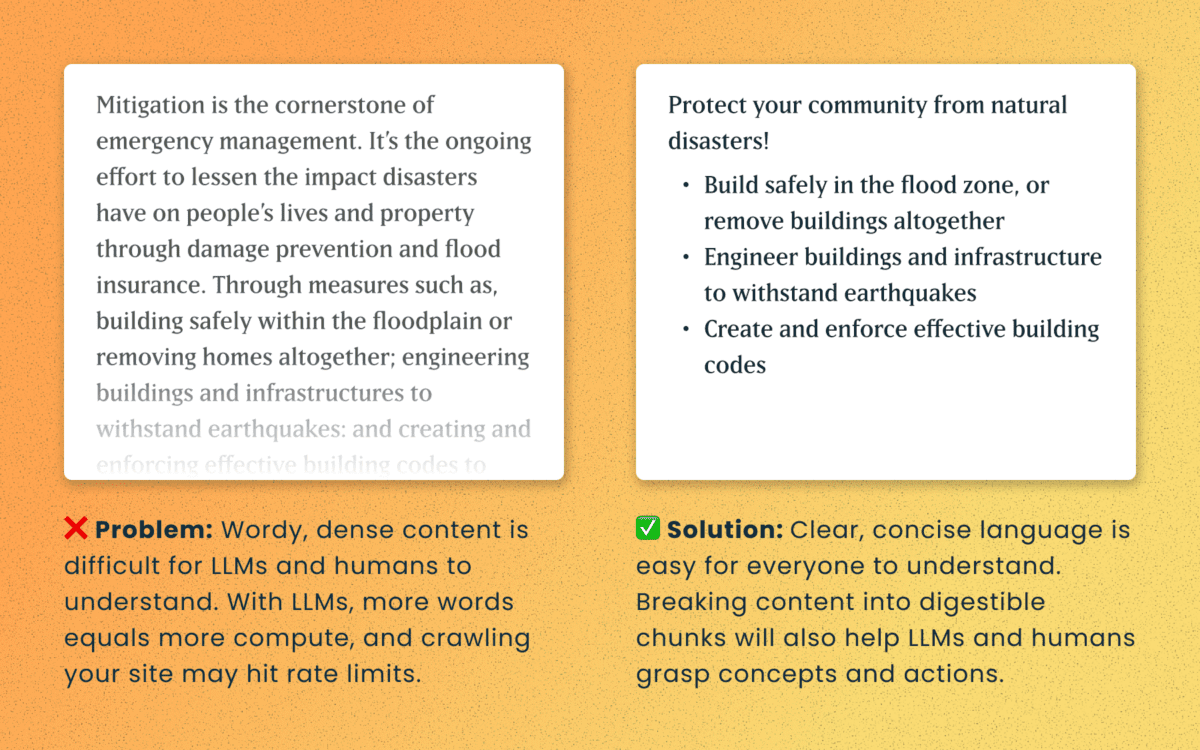

Logical order and readable content

Simple, direct sentences (one fact per sentence) produce cleaner embeddings for LLM retrieval. Human accessibility best practices of plain language and clear structure are the same practices that improve chunking and indexing for LLMs.

For Technical Teams — IT, Developers, Engineers

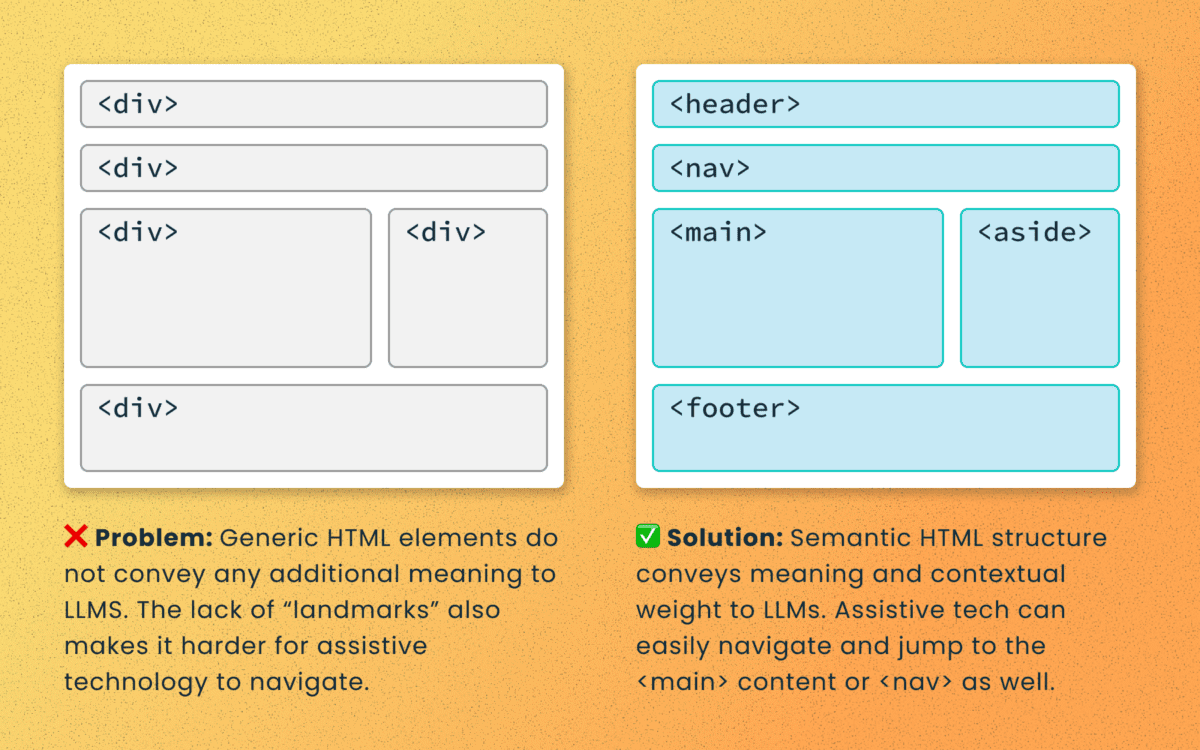

Poorly structured semantic HTML

Semantic elements (<article>, <nav>, <main>, <h1>, etc.) add context and suggest relative ranking weight. They make content boundaries explicit, which helps retrieval systems isolate your content from less important elements like ad slots or lists of related articles.
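To illustrate why explicit boundaries help, the sketch below shows how a simple extractor could keep only the text inside `<main>` or `<article>` and drop anything inside `<nav>`, `<aside>`, or `<footer>`. This is an assumption about how a retrieval pipeline might behave, not a description of any specific crawler; the class name and sample page are hypothetical.

```python
from html.parser import HTMLParser

CONTENT_TAGS = {"main", "article"}
SKIP_TAGS = {"nav", "aside", "footer"}


class MainContentExtractor(HTMLParser):
    """Keep text inside <main>/<article>, ignoring <nav>, <aside>, and <footer>."""

    def __init__(self):
        super().__init__()
        self.content_depth = 0
        self.skip_depth = 0
        self.parts = []

    def handle_starttag(self, tag, attrs):
        if tag in CONTENT_TAGS:
            self.content_depth += 1
        elif tag in SKIP_TAGS:
            self.skip_depth += 1

    def handle_endtag(self, tag):
        if tag in CONTENT_TAGS:
            self.content_depth = max(0, self.content_depth - 1)
        elif tag in SKIP_TAGS:
            self.skip_depth = max(0, self.skip_depth - 1)

    def handle_data(self, data):
        if self.content_depth and not self.skip_depth and data.strip():
            self.parts.append(data.strip())


# Hypothetical page for illustration.
SAMPLE = """
<nav><a href="/">Home</a></nav>
<main>
  <article>
    <h1>How to descale a kettle</h1>
    <p>Fill the kettle with equal parts water and vinegar.</p>
    <aside>Related: best kettles of the year</aside>
  </article>
</main>
<footer>Copyright notice</footer>
"""

extractor = MainContentExtractor()
extractor.feed(SAMPLE)
extractor.close()
main_text = " ".join(extractor.parts)
print(main_text)
```

On a page built only from generic `<div>` elements, an extractor like this has nothing to go on, and navigation or related-article text can end up mixed into the same chunk as the main content.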

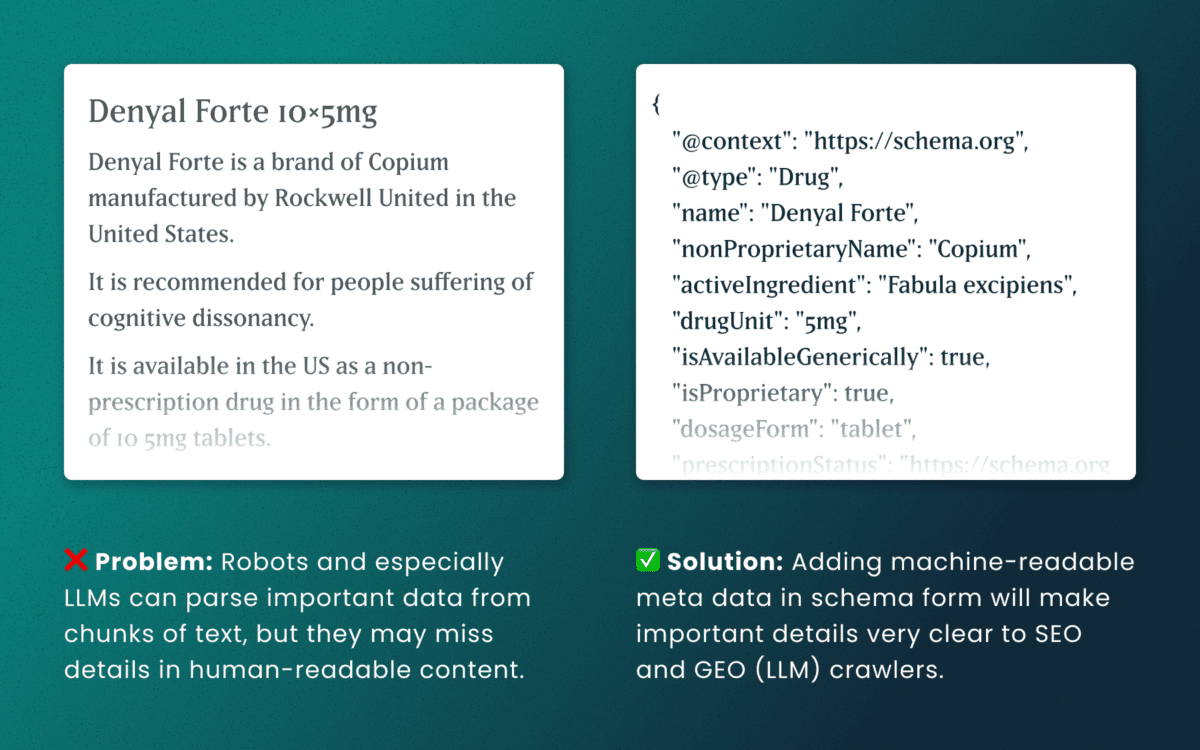

Lack of schema

This is technical and sits under the hood of your human-readable content. Machines love additional context, and structured schema data is how facts are declared in code: product names, prices, event dates, authors, etc. Search engines have long used schema for rich results, and LLMs are no different. Right now, server-rendered schema data offers the widest visibility, as not all crawlers fully execute client-side JavaScript.
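As a rough example of server-rendered schema, the sketch below builds a schema.org `Article` description as JSON-LD and wraps it in a script tag that a server-side template could place in the page. The function name `article_json_ld`, the author, the date, and the URL are all placeholders, and the properties shown are only a small subset of what schema.org defines.

```python
import json


def article_json_ld(headline, author_name, date_published, url):
    """Build a JSON-LD <script> block describing an Article (schema.org vocabulary)."""
    data = {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "author": {"@type": "Person", "name": author_name},
        "datePublished": date_published,
        "mainEntityOfPage": url,
    }
    # Escape "</" so a value can never close the surrounding <script> tag early.
    payload = json.dumps(data, indent=2).replace("</", "<\\/")
    return f'<script type="application/ld+json">\n{payload}\n</script>'


# Placeholder values for illustration only.
snippet = article_json_ld(
    headline="Accessibility and GEO",
    author_name="Jane Example",
    date_published="2025-01-15",
    url="https://example.com/accessibility-geo",
)
print(snippet)
```

Because the markup is generated on the server and delivered in the initial HTML response, crawlers that never run client-side JavaScript can still read it.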

How to make accessibility even more actionable

The work of digital accessibility is often pushed to the bottom of the priority list. But once again, there are additional ways to frame this work as high value. While this work is beneficial for SEO, our recent research shows that it remains impactful in the new and evolving world of GEO.

If you need to frame an argument for those who control the investments of time and money, some talking points are:

- Accurate brand representation — Poor accessibility hides facts from LLMs. When customers ask an AI assistant for “best X for Y,” your content may not be shown — or worse, misrepresented. Fixing accessibility reduces brand risk and increases content authority.

- Engagement boost — Improvements that increase accurate citations and AI visibility can increase referral traffic, feature mentions, and lead quality. In a landscape where AI Answers are reducing click-through rates, keeping the traffic you have on your site for longer and building brand trust becomes vital.

- Increased exposure — Digital inclusion makes your content widely accessible to machines and the machines that assist humans. Think about a search engine as another human-assistive device, just like a keyboard or screen reader.

- Multi-pronged benefits — Accessibility work improves traditional SEO, can benefit mobile performance, and reduces the risks associated with accessibility compliance policies.

Staying steady in the storm

Let’s be clear — this summer was a “generative AI search freak out.” Content teams have scrambled to get smart about LLM-powered search quickly while search providers rolled out new tools and updates weekly. It’s been a tough ride in a rough sea of constant change.

To counter all that, know that the fundamentals are still strong. If your team has been using accessibility as a measure for content effectiveness and SEO discoverability, don’t stop now. If you haven’t yet started, this is one more reason to apply these principles tomorrow.

If you still have questions in this rapidly evolving landscape, talk to us about SEO, GEO, content strategy, and accessibility conformance. Ask about our training and documentation available for content teams.

Additional Reading

- AHREFs.com: Is SEO Dead? Real Data vs. Internet Hysteria

- SearchEngineJournal.com: How LLMs Interpret Content: How To Structure Information For AI Search

- InclusionHub.com: SEO and Web Accessibility: What You Need to Know (from 2020, but still relevant)

THE CHALLENGE

For caregivers, clinicians, and individuals impacted by dementia, finding reliable, up-to-date resources is often difficult. Many existing platforms were outdated, hard to navigate, and cluttered with static information that failed to reflect the latest research and best practices.

To address this, a team at the University of California, San Francisco (UCSF) secured grant funding to create a new, centralized digital resource for dementia care. This website would serve as a go-to hub for caregivers, healthcare professionals, and those living with dementia, making essential guidance, local support services, and educational tools more accessible and easier to use.

UCSF partnered with Oomph to develop a modern, scalable platform designed to improve content discovery, simplify search, and support long-term content growth.

OUR APPROACH

To ensure the new dementia care site was intuitive, structured, and easy to maintain, Oomph worked closely with UCSF to:

1. Build a Flexible and Organized Content System

With hundreds of resources ranging from clinical guides to local service listings, content needed to be structured for easy access. We:

- Developed a content model that allows UCSF to continuously expand and update information.

- Designed audience-specific pathways so caregivers, clinicians, and individuals with dementia can quickly find relevant content.

- Built an admin system that simplifies content management for UCSF’s team.

2. Optimize Search and Resource Navigation

Given the depth of content, finding the right resources quickly was a priority. We:

- Built a location-based filtering system to help users find local dementia support services.

- Designed an intuitive search experience that prioritizes the most relevant resources.

- Created structured content relationships so users can easily explore related topics.

3. Introduce Video and Multimedia Features

To make the site more engaging, UCSF wanted to integrate video content as a core educational tool. We:

- Developed a featured video content block that highlights key dementia care topics.

- Ensured seamless integration of video alongside traditional text-based resources.

- Designed a flexible content structure that allows UCSF to scale its multimedia offerings over time.

THE RESULTS

A Smarter, More Accessible Dementia Care Resource

The new dementia care platform is a comprehensive digital tool designed to improve how caregivers and clinicians access critical information.

- One centralized hub for dementia care resources, all timely and up-to-date.

- Fast, intuitive navigation that allows users to find resources based on role and location.

- Optimized multimedia experience that integrates video education alongside traditional content.

- A scalable platform that UCSF can continue to expand as research and best practices evolve.

By focusing on content organization, searchability, and usability, Oomph delivered a digital hub that will support dementia care communities for years to come.

Helping Healthcare Organizations Build Digital Resources That Matter

For healthcare providers, research institutions, and public health organizations, a well-designed digital platform can be the difference between confusion and clarity, isolation and support. Let’s connect to see how we can help.